Automating Fax Orders Through Leveraging OCR, Deep Learning, & Machine Learning

September 3, 2018

By Gaurav Goyal and Dharmesh Panchmatia

Companies are aiming for the moon with AI, but to make a real success of the technology, decision-makers need to deploy it against clear business problems. In this case study, Cisco Principal IT Architect Gaurav Goyal and Senior Director of eCommerce Dharmesh Panchmatia explain how the firm used a trial-and-error approach to AI to solve one such problem: the need to manually process fax orders.

Problem Statement:

Cisco Commerce is one of the largest eCommerce platforms in the industry and is responsible for booking Cisco’s $40+ billion in annual revenue. While the bulk of orders are placed by users on the platform, we receive about $4 billion worth of orders through fax and email, which have to be entered manually by Cisco support teams.

We have a large task force for manual processing of these orders, and processing them can take anywhere from a few hours to a few days. This creates a stressful situation at quarter ends, when a large number of orders have to be booked in a very short amount of time. The cost of processing each order is also high because the process is so manual.

Our goal was to automate this manual process for at least 90% of the fax orders to improve the processing time by 99% and reduce support costs by about $1.7 million annually.

Approach:

We were naïve in the beginning and thought that with a combination of OCR and a rule-based approach, we would be able to address this problem quickly. We quickly realized our folly.

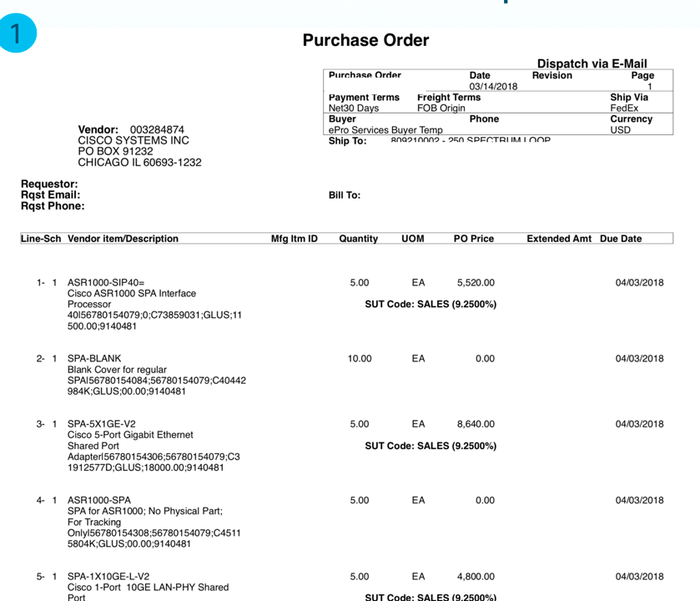

The challenge we ran into was that there was no standard template for fax orders. Every partner used a custom template for sending orders, and even a single partner's templates changed over time. So we were dealing with thousands of different templates that kept changing. The accompanying image shows one of the order templates we were dealing with.

We had already started experimenting with different machine learning and deep learning algorithms at this point, so we thought we could apply some of these techniques to help us achieve our goal. We started with machine learning techniques, as they are the most cost-effective.

1st Attempt: Machine Learning (spaCy) & OCR

In our first attempt, we decided to use spaCy (an open source library that provides Named Entity Recognition) to identify blocks of alphanumeric values and segment them based on the labels we were expecting in the orders, such as Billing Address, Item Name, Quantity, Unit List Price, Total Price and PO Number. Once the segmentation was complete, we used OCR (Optical Character Recognition) to read the information in the blocks and categorize it based on those labels.

The approach worked very well for simple header-level information such as the PO number, but we ran into challenges with variable data such as customer addresses, which could span anywhere from 3 to 5 lines. We augmented the approach with a Naïve Bayes classifier to identify addresses, and it improved our accuracy for address detection considerably.
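To make the address step concrete, here is a minimal sketch of a Naïve Bayes line classifier written with only the Python standard library. It is an illustration under stated assumptions, not the classifier used at Cisco: the training lines, the two labels (`address` and `other`), and the `<num>` token trick are all made up for the example.

```python
import math
from collections import Counter, defaultdict


def tokenize(line):
    """Lowercase a line and map every token containing a digit to <num>."""
    tokens = []
    for raw in line.lower().replace(",", " ").split():
        tokens.append("<num>" if any(ch.isdigit() for ch in raw) else raw.strip(".:"))
    return [t for t in tokens if t]


class NaiveBayesLineClassifier:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, lines, labels):
        self.class_counts = Counter(labels)
        self.token_counts = defaultdict(Counter)
        self.vocab = set()
        for line, label in zip(lines, labels):
            for tok in tokenize(line):
                self.token_counts[label][tok] += 1
                self.vocab.add(tok)
        return self

    def predict(self, line):
        total_docs = sum(self.class_counts.values())
        best_label, best_score = None, -math.inf
        for label, n_docs in self.class_counts.items():
            # log prior + sum of smoothed log likelihoods
            score = math.log(n_docs / total_docs)
            denom = sum(self.token_counts[label].values()) + len(self.vocab)
            for tok in tokenize(line):
                score += math.log((self.token_counts[label][tok] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Hypothetical training lines, invented for this sketch.
TRAIN = [
    ("123 Main Street Suite 400", "address"),
    ("San Jose CA 95134", "address"),
    ("500 Market Avenue Building 2", "address"),
    ("Austin TX 78701", "address"),
    ("PO Number 778812", "other"),
    ("Quantity 12 Unit List Price 450.00", "other"),
    ("Total Price 5400.00", "other"),
    ("Item Name Router Quantity 3", "other"),
]

clf = NaiveBayesLineClassifier().fit(
    [text for text, _ in TRAIN], [label for _, label in TRAIN]
)
print(clf.predict("742 Oak Street Suite 9"))
print(clf.predict("Total Price 980.00"))
```

In practice a classifier like this would be trained on many labelled OCR lines, and consecutive lines predicted as `address` could then be merged into a single multi-line address block.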

But in spite of multiple trials and errors, we could not overcome the following problems:

Detection of cells within each line: Information about the items purchased was typically in a tabular format, with each line giving the details of one item and each column giving an item-specific attribute such as quantity or price. While the algorithm could detect line boundaries very well, we ran into significant inaccuracies in identifying cells within each line.

Overlapping information across cells: The algorithm had low accuracy with orders where information overlapped between different cells within a line.

Orders in non-English languages: Order information in languages other than English was also not detected accurately.

Multi-page orders: The algorithm was confused by multi-page orders when the subsequent pages did not repeat the column labels for the lines.

Accuracy with the machine learning approach was about 60%, significantly below our target, so we decided to experiment with deep learning algorithms.

2nd Attempt: Deep Learning (SSD/Faster R-CNN), LabelIMG & OCR

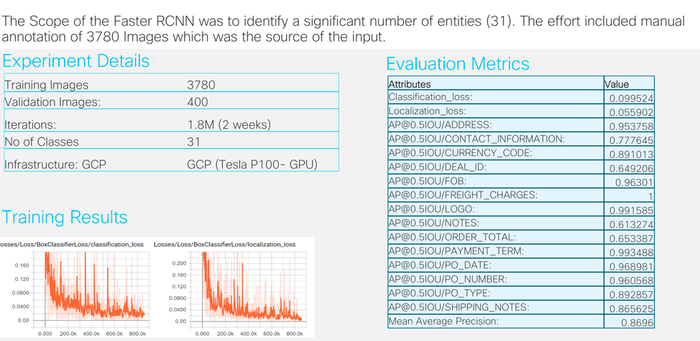

For deep learning algorithms we needed processing power, so we decided to use Google Cloud Platform to train our models. We split the data in a ratio of 80-10-10: 80% for training, 10% for validation and 10% for testing.
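An 80-10-10 split can be sketched in a few lines of Python. The function name, the fixed seed and the file names below are assumptions made for illustration; the article does not describe how the split was actually implemented.

```python
import random


def split_80_10_10(items, seed=0):
    """Shuffle a copy of items and split it 80/10/10 into train/val/test."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded for a reproducible split
    n_train = len(shuffled) * 8 // 10
    n_val = len(shuffled) // 10
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # the remainder goes to the test set
    return train, val, test


# Hypothetical file names standing in for annotated order documents.
orders = [f"order_{i:04d}.pdf" for i in range(5000)]
train, val, test = split_80_10_10(orders)
print(len(train), len(val), len(test))
```

Integer arithmetic is used for the cut points so the three parts always add up to the full data set, with any rounding remainder landing in the test set.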

We decided to start with the SSD (Single Shot Detector) algorithm, as it is much faster to train and to run inference with. We used LabelIMG, a Python tool for graphical annotation, to mark up the orders with labels and the blocks containing the details for those labels. This was a time-consuming process for 5,000 order templates. Once the annotation was complete, we used SSD to detect the content for the labels. The approach worked fine for header-level information, but line-level data was still an issue.
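For readers who have not used the tool: by default it saves each annotated image as an XML file in the Pascal VOC format, with one `object` element per labelled box. The sketch below parses such a file into a list of boxes. The sample XML, the file name and the label names (`po_number`, `billing_address`) are invented for the example and are not taken from Cisco's data.

```python
import xml.etree.ElementTree as ET

# Hypothetical annotation in Pascal VOC layout; all values are made up.
SAMPLE_XML = """
<annotation>
  <filename>order_0001.png</filename>
  <size><width>1700</width><height>2200</height><depth>3</depth></size>
  <object>
    <name>po_number</name>
    <bndbox><xmin>1200</xmin><ymin>150</ymin><xmax>1550</xmax><ymax>200</ymax></bndbox>
  </object>
  <object>
    <name>billing_address</name>
    <bndbox><xmin>100</xmin><ymin>300</ymin><xmax>700</xmax><ymax>480</ymax></bndbox>
  </object>
</annotation>
"""


def parse_voc(xml_text):
    """Return one dict per annotated box: label plus pixel coordinates."""
    root = ET.fromstring(xml_text.strip())
    boxes = []
    for obj in root.findall("object"):
        bndbox = obj.find("bndbox")
        box = {"label": obj.findtext("name")}
        for key in ("xmin", "ymin", "xmax", "ymax"):
            box[key] = int(bndbox.findtext(key))
        boxes.append(box)
    return boxes


boxes = parse_voc(SAMPLE_XML)
for box in boxes:
    print(box)
```

Boxes in this form are what an object detector such as SSD is trained to predict: a class label and a rectangle for each region of interest on the page.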

As the accuracy was not good enough, we experimented with Faster R-CNN in lieu of SSD. Faster R-CNN gave us better results for line detection, with very good accuracy for header-level information as well as for lines. It also handled overlaps and missing line headers on subsequent pages well. But the challenge was still the detection of content in cells within a line.

Even though detection of content within cells for each line was much better than with SSD, we still ran into challenges for certain order templates. Cell-level detail was good for many cells, such as quantity or price, but the model missed the cell data for some lines. We did not have enough documents to train the model, and after 1.8 million iterations the loss started to increase. The image below shows the sample output of a Faster R-CNN run and accuracy for identifying each of the units.

Final Approach: Hybrid of Deep Learning (Faster R-CNN), Machine Learning (Naïve Bayes/spaCy), LabelIMG & OCR

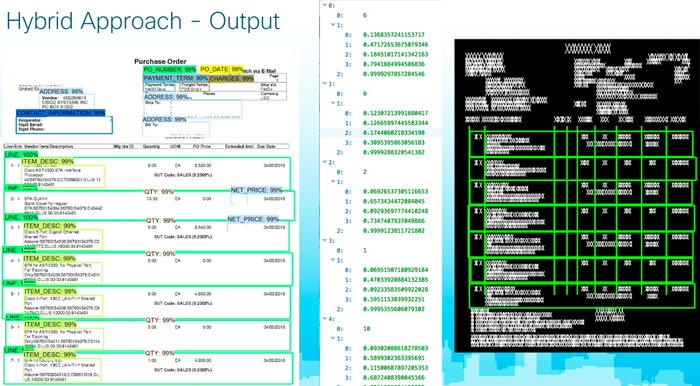

As we were not getting the desired accuracy either from deep learning or machine learning, we finally decided to go with a hybrid approach. The approach was as follows:

Leverage LabelIMG to annotate the order PDFs.

Run Faster R-CNN to identify the labels and the line-level cell boundaries.

Superimpose the cells detected by deep learning onto the machine learning segmentation.

Machine Learning takes cell information identified in some lines by Faster R-CNN and applies it to find cell information on missing lines.

With this approach our accuracy jumped from 70% to 85+%.
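The last step of the hybrid approach, reusing cells found on some lines to recover cells on lines the detector missed, can be illustrated with a small sketch. It assumes each detected cell is a `(label, xmin, xmax)` tuple and each OCR word is a `(text, x_center)` pair, and it uses a plain median of column boundaries as a stand-in for the machine learning step. The data and function names are hypothetical.

```python
from statistics import median


def infer_columns(detected_rows):
    """Median x-range per column label across rows where cells were detected."""
    spans = {}
    for row in detected_rows:
        for label, xmin, xmax in row:
            spans.setdefault(label, []).append((xmin, xmax))
    return {
        label: (median(x0 for x0, _ in s), median(x1 for _, x1 in s))
        for label, s in spans.items()
    }


def fill_missing_row(words, columns):
    """Assign OCR words (text, x_center) on a row to the inferred columns."""
    cells = {label: [] for label in columns}
    for text, x_center in words:
        for label, (xmin, xmax) in columns.items():
            if xmin <= x_center <= xmax:
                cells[label].append(text)
                break  # each word belongs to at most one column
    return {label: " ".join(parts) for label, parts in cells.items()}


# Cells the detector found on three lines (made-up coordinates).
detected_rows = [
    [("item", 100, 600), ("quantity", 620, 760), ("price", 780, 1000)],
    [("item", 104, 598), ("quantity", 624, 758), ("price", 776, 1004)],
    [("item", 98, 602), ("quantity", 618, 762), ("price", 782, 998)],
]
columns = infer_columns(detected_rows)

# OCR words on a line where the detector returned no cells.
missed_line = [("ROUTER-A", 180), ("Chassis", 400), ("4", 690), ("1,250.00", 900)]
recovered = fill_missing_row(missed_line, columns)
print(recovered)
```

The idea is that columns in an order table line up vertically, so geometry learned from the lines that were detected is a reasonable prior for the lines that were not.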

The hybrid flow and a sample output from the hybrid approach are shown below:

Conclusion:

We are going to use a combination of deep learning and machine learning to automate the manual fax ordering process. The hybrid approach is giving us 85+% accuracy, and even for the remaining 15% of orders that it cannot create automatically, the bulk of the information is captured, so very little manual effort is needed to correct the order. It took us a while to figure out the final approach, but we have finally achieved the accuracy we wanted. It will help us save about $1.7 million annually and improve our order processing time by 99%.

To find out more about how Cisco are utilising the power of deep learning and machine learning, catch up with their team at this month's AI Summit San Francisco.

Dharmesh Panchmatia is Sr. Director at Cisco responsible for architecture and engineering of eCommerce Platform. Dharmesh has over 2 decades of software development and stakeholder management experience and has worked for HP and NEC prior to Cisco. Dharmesh has played a key role in transforming a struggling eCommerce (CPQO - Configure, Price, Quote & Order) platform to make it one of the largest eCommerce platforms in the industry today.

Gaurav is a Principal Architect for Cisco, based out of San Jose, CA. In his role, Gaurav leads the entire e-Commerce ordering platform for Cisco, focusing on Java/J2EE, MongoDB, Elasticsearch, Kafka, chatbots, machine learning and other related technologies. His areas of expertise include enterprise data design, systems integration and migrating native applications to the cloud. Gaurav likes to take a pragmatic approach toward delivering software, with a focus on building highly scalable, fault-tolerant and highly supportable applications.