Highlights from the conference

The Google I/O conference this week was packed with product launches and prototypes. For those who missed the two-day event, here are some highlights from the many announcements made by the search giant.

Quick analyst's take: "Overall, we did not see any drivers of meaningful revenue upside, but we continue to be impressed with Google's AI/ML-driven technology innovation," wrote BofA Global Research analyst Justin Post, in a report.

AI Test Kitchen and LaMDA 2

Google is expanding its conversational AI capabilities with LaMDA 2, its generative language model trained specifically to converse in a free-flowing way. LaMDA stands for Language Model for Dialogue Applications.

The first iteration, LaMDA, was opened up to thousands of Google engineers who tested and improved it. This led to LaMDA 2. In the same spirit, Google created AI Test Kitchen, where a select, broader community can experiment with LaMDA in conversational AI.

Google also highlighted its Pathways Language Model (PaLM), a separate large language model with 540 billion parameters.

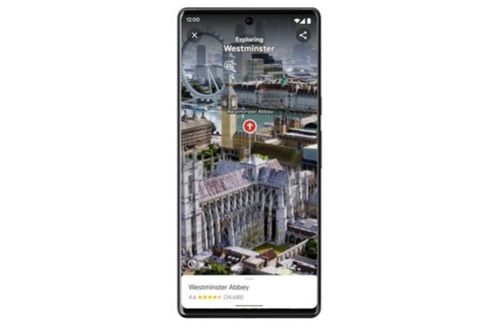

More realistic Google Maps

With 3D mapping and AI/ML, Google is combining aerial and street-level images in Maps to create a more realistic view of a location. The feature is called ‘immersive view,’ and its images are not captured by drone. Rather, Google is using ‘neural rendering’ to create the view from images alone. This feature is coming to select cities globally later in 2022.

Google Docs’ automatic summary

Didn’t read the report ahead of the meeting? Google has a tool for that. Using AI/ML for text summarization, Google Docs can now automatically look through the content and retrieve the main points.

“This marks a big leap forward in natural language processing,” said Alphabet and Google CEO Sundar Pichai, in a blog. “Summarization requires understanding of long passages, information compression and language generation, which used to be outside the capabilities of even the best machine learning models.”

This feature is coming to Google Chat in the coming months and soon to Google Meet. Google Meet will also use ML-powered image processing to improve image quality, which will work on all types of devices.

Expanding to 10 skin tones

Google will be incorporating into its products the Monk Skin Tone (MST) Scale, which is a 10-shade scale developed by Ellis Monk, a Harvard professor and sociologist who studied skin tone and its effect on people’s lives.

Multisearch = Local + Scene

Multisearch lets users go beyond a text search: they can search with images and text at the same time. Through the Google app, users tap the Lens camera icon to search a screenshot or take a photo of something they would like to find – such as a felt hat.

Google is adding a new feature to Multisearch – local information. Available later this year in English, the feature lets you search via a photo or screenshot and add ‘near me’ to find local retailers, restaurants and other listings that might have what you need.

For example, if you see a Nepalese dish online you would like to try but do not know what it’s called, Google can search millions of images to yield results of nearby restaurants that offer it.

Another upcoming feature is called ‘scene exploration.’ When you use Google Lens to pan across a number of items, you can get information on the whole group at the same time instead of having to scan each one individually.

No more 'Hey Google'

Google Assistant is removing one pain point for users: the need to say ‘Hey Google’ before the service activates. Users only need to look at a device running Google Assistant and start talking. For now, the feature is only available on the Google Nest Hub Max.

Glasses that can translate in real time

Google is testing an early prototype of glasses that can translate languages in real time through AR. They look like ordinary glasses, not like the original Google Glass.

The person wearing the glasses can see a translation of what is being said right in the lens, for instant comprehension. Google did not give a timeline for a release.

Google Translate adds 24 more languages

Google is adding more languages to its roster in Translate, especially those that are under-represented online. Translation is hard because models are trained on bilingual text, such as paired text in English and Chinese, and not every language has enough publicly available pairs to train on.

But using advanced machine learning, Google developed a ‘monolingual’ way to translate languages even without training pairs, by working with native speakers and institutions to develop the translations. The new languages are Assamese, Aymara, Bambara, Bhojpuri, Dhivehi, Dogri, Ewe, Guarani, Ilocano, Konkani, Krio, Kurdish (Sorani), Lingala, Luganda, Maithili, Meiteilon (Manipuri), Mizo, Oromo, Quechua, Sanskrit, Sepedi, Tigrinya, Tsonga and Twi.
