The success of MT-NLG will make large AI models ‘cheaper and faster to train,’ the pair claims

Microsoft and Nvidia have joined forces to create what they claim is the world’s largest and most powerful monolithic transformer-based language model.

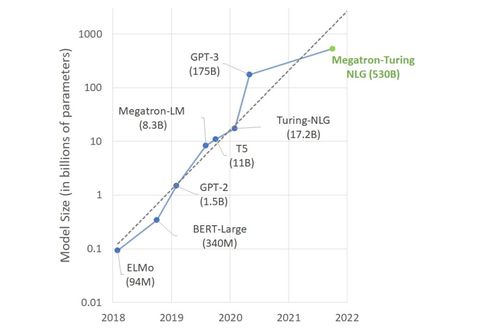

Dubbed Megatron-Turing Natural Language Generation (MT-NLG), it contains 530 billion parameters – far more than the 175bn of OpenAI’s famous GPT-3.

The companies claim their model achieves “unmatched” accuracy across a range of natural language tasks, including natural language inference and reading comprehension.

“The quality and results that we have obtained today are a big step forward in the journey towards unlocking the full promise of AI in natural language,” reads a blog post authored by Ali Alvi, group program manager of Microsoft’s Turing team, and Paresh Kharya, senior director of product management and marketing for accelerated computing at Nvidia.

“We look forward to how MT-NLG will shape tomorrow’s products and motivate the community to push the boundaries of NLP even further.”

What is MT-NLG?

MT-NLG is the successor to Microsoft’s Turing NLG 17B and Nvidia’s Megatron-LM language models.

To train the latest version, the development team used 15 datasets consisting of a total of 339bn tokens from English-language websites. This was later whittled down to 270bn tokens.

Those datasets mostly originated from The Pile, a collection of smaller open source datasets available to researchers, which were then curated and combined with webpages obtained via Common Crawl.

MT-NLG’s training took place on Nvidia’s Selene ML supercomputer – which is made up of 560 DGX A100 servers, each containing eight A100 80GB GPUs.
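To put those hardware figures in perspective, the short calculation below multiplies them out. It is only a back-of-the-envelope sketch based on the numbers quoted above; the variable names are illustrative and the companies did not publish this code.

```python
# Figures quoted in the article for the Selene supercomputer.
servers = 560            # DGX A100 servers
gpus_per_server = 8      # A100 GPUs in each server
memory_per_gpu_gb = 80   # memory per A100 80GB GPU

# Total GPU count and aggregate GPU memory across the whole machine.
total_gpus = servers * gpus_per_server
total_memory_gb = total_gpus * memory_per_gpu_gb

print(total_gpus)        # 4480
print(total_memory_gb)   # 358400
```

That works out to 4,480 GPUs with roughly 358 terabytes of combined GPU memory.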

Given the sizable costs associated with training these types of models, the companies used DeepSpeed, Microsoft’s open source deep learning optimization library, which is designed to make training large models across many GPUs more efficient.

“By combining tensor-slicing and pipeline parallelism, we can operate them within the regime where they are most effective,” the blog post reads.

“More specifically, the system uses tensor-slicing from Megatron-LM to scale the model within a node and uses pipeline parallelism from DeepSpeed to scale the model across nodes.”
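The division of labor described in the quote can be pictured with a toy mapping. The sketch below is purely illustrative and does not use the Megatron-LM or DeepSpeed APIs: it assumes, for simplicity, one pipeline stage per server and a hypothetical cluster of four servers, with the eight GPUs inside each server sharing slices of the same layers.

```python
GPUS_PER_NODE = 8  # from the article: eight A100 GPUs per DGX A100 server


def layout(num_nodes, gpus_per_node=GPUS_PER_NODE):
    """Map each GPU's global rank to a pipeline stage and a tensor-slice rank.

    Assumed layout (hypothetical): each node holds one pipeline stage, and
    the GPUs inside that node each hold one slice of the stage's tensors.
    """
    placement = {}
    for node in range(num_nodes):
        for local_gpu in range(gpus_per_node):
            global_rank = node * gpus_per_node + local_gpu
            placement[global_rank] = {
                "pipeline_stage": node,    # scales the model across nodes
                "tensor_rank": local_gpu,  # scales the model within a node
            }
    return placement


# Hypothetical four-server example; the real training setup is far larger.
placement = layout(num_nodes=4)
print(placement[9])  # {'pipeline_stage': 1, 'tensor_rank': 1}
```

In this simplified picture, the heavy communication needed for tensor slicing stays on the fast links inside a server, while only the lighter hand-off between pipeline stages crosses servers.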

What can it do?

MT-NLG can auto-complete sentences, handle reading comprehension and perform commonsense reasoning.

The researchers claim it conducts its tasks with little to no fine-tuning.

It has three times the number of parameters compared to the largest existing model of this type, Alvi and Kharya wrote.

The data and results will “benefit existing and future AI model development and make large AI models cheaper and faster to train,” they said.

How does it stack up against the competition?

MT-NLG faces stiff competition for the title of the world’s largest language model.

Chinese academics claimed to have built the world’s largest language model back in June. Their WuDao 2.0 model, unveiled at the 2021 Beijing Academy of Artificial Intelligence (BAAI) Conference, had 1.75 trillion parameters.

Google Brain previously developed an artificial intelligence language model with 1.6 trillion parameters, using a technique called Switch Transformers. Neither of these two was a monolithic transformer model, preventing a meaningful ‘apples-to-apples’ comparison.

In April, Chinese tech giant Huawei unveiled what it called the world’s largest Chinese NLP model, Pangu NLP, trained with 207bn parameters.

Meanwhile, South Korean internet giant Naver debuted a new AI platform in June, based on 204bn parameters.
