Such systems ‘should never be used without cause and need’

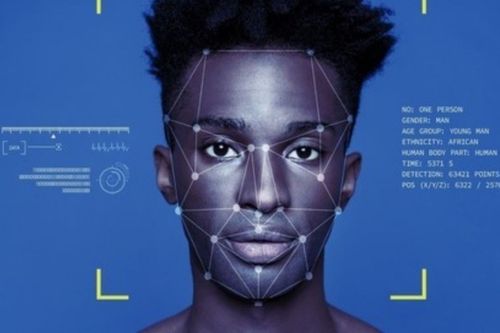

The World Economic Forum and INTERPOL have outlined a series of best practices for law enforcement agencies using facial recognition technologies (FRT).

The practices were outlined in a white paper titled “Responsible limits on facial recognition, use case: Law enforcement investigation.”

The Center for AI and Robotics of the UN Interregional Crime and Justice Research Institute (UNICRI), and the Netherlands police were among the paper’s authors.

The document covers a series of example investigations and applications where law enforcement agencies might deploy facial recognition – such as fighting child abuse and looking for terrorists in public spaces.

It includes a set of principles that define what constitutes the responsible use of facial recognition in criminal investigations. It also stresses the proportional use of such systems – stating that the decision to deploy “should always be guided by the objective of striking a fair balance between allowing law enforcement agencies to deploy the latest technologies… and the necessity to protect the human rights of individuals.”

“As a general principle, FRT should never be used without cause and need that otherwise would undermine human and fundamental rights,” the paper reads.

It features a self-assessment questionnaire detailing the requirements that law enforcement agencies must respect to ensure compliance with the principles for action.

“Around the world, law enforcement agencies are rapidly adopting facial recognition technology as part of their investigations, but this comes with risks for citizens,” said Kay Firth-Butterfield, head of AI and machine learning at the WEF.

“This is the first global multi-stakeholder effort to mitigate these risks effectively.”

Governance challenges

“Around 1,500 terrorists, criminals, fugitives, persons of interest, or missing persons have been identified since INTERPOL began using facial recognition systems in 2016,” revealed Cyril Gout, the agency’s director of operational support and analysis.

“We will support its implementation through our global police network to increase awareness of this important biometric technology.”

Remote biometric identification technologies, and facial recognition in particular, have gained considerable traction in law enforcement, and FRT accuracy has improved significantly in recent years. However, incorrect implementation of the technology can result in abuses of human rights and harm to citizens, particularly those in underserved communities.

Dutch police will begin testing the self-assessment questionnaire proposed in the white paper in early 2022. “Building and maintaining trust with citizens is fundamental to accomplish our mission and we are well aware of the various concerns related to facial recognition,” said Marjolein Smit-Arnold Bik, head of the country’s special police operations.

“Being the first law enforcement agency to test the self-assessment questionnaire is a means to reaffirm our commitment to the responsible use of facial recognition for the benefits of our community.”

The new framework is designed to address gaps in professional training for such systems and in the procurement process, the authors said.

The EU’s biometric ban

Under recently proposed EU legislation, law enforcement agencies would be prohibited from using remote biometric identification systems in publicly accessible spaces without clear reason. The draft text describes a number of exemptions: such systems could still be used to “search for a missing child, to prevent a specific and imminent terrorist threat or to detect, locate, identify or prosecute a perpetrator or suspect of a serious criminal offense.”
