Facial Recognition AI Used To 'Detect' Sexual Preference - Researchers Face Human Rights Backlash

September 14, 2017

Last week, the Internet was ablaze with justifiable concern—and even outrage—regarding the implications of new research from Stanford University. The paper, entitled ‘Deep neural networks are more accurate than humans at detecting sexual orientation from facial images’, aimed to outline the ways in which facial recognition tools could be used to profile ‘intimate psychodemographic traits’ such as sexuality.

Using a deep neural network (DNN), Michal Kosinski and Yilun Wang demonstrated that off-the-shelf machine learning tools could be utilised to invade people’s privacy.

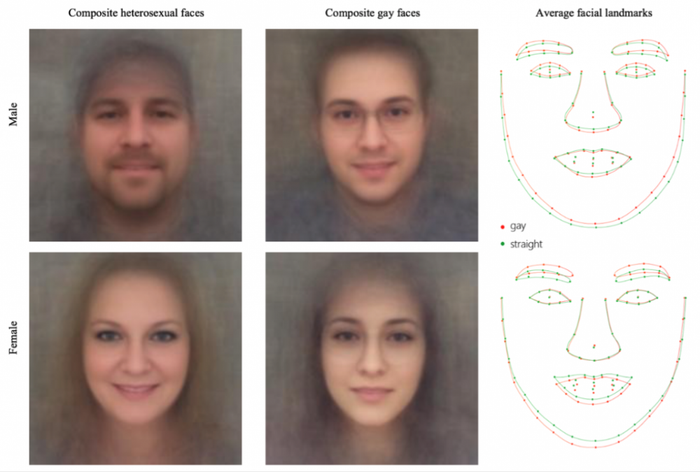

“A DNN was used to extract features from the facial images of 35,326 gay and heterosexual men and women,” the report explains. “These features were entered (separately for each gender) as independent variables into a cross-validated logistic regression model aimed at predicting self-reported sexual orientation. The resulting classification accuracy offers a proxy for the amount of information relevant to the sexual orientation displayed on human faces.”
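The method the quote describes has two stages: fixed feature vectors, then a cross-validated logistic regression whose accuracy is read as a measure of how much signal those features carry. The minimal sketch below illustrates only the second stage, and it rests on several assumptions. Random synthetic vectors stand in for the DNN-extracted features. The labels are a generic binary outcome. The helper names `train_logistic`, `predict_proba` and `cross_validated_accuracy` are hypothetical. The fit uses plain, unregularised gradient descent, which is not necessarily what the authors used.

```python
import math
import random

def sigmoid(z):
    # Numerically stable logistic function
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def train_logistic(X, y, lr=0.1, epochs=200):
    """Fit logistic-regression weights and a bias by batch gradient descent."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            # Gradient of the log loss is (prediction - label) * input
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def predict_proba(model, x):
    w, b = model
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)

def cross_validated_accuracy(X, y, k=5, seed=0):
    """Mean accuracy over k folds; each fold is scored by a model trained on the other folds."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    scores = []
    for test_idx in folds:
        held_out = set(test_idx)
        train_idx = [i for i in idx if i not in held_out]
        model = train_logistic([X[i] for i in train_idx], [y[i] for i in train_idx])
        correct = sum((predict_proba(model, X[i]) >= 0.5) == (y[i] == 1) for i in test_idx)
        scores.append(correct / len(test_idx))
    return sum(scores) / k

# Synthetic stand-in data: 200 vectors of length 4, where only the first
# feature is (weakly) related to the binary label.
rng = random.Random(42)
X, y = [], []
for _ in range(200):
    label = rng.randint(0, 1)
    X.append([rng.gauss(0.8 * label, 1.0)] + [rng.gauss(0.0, 1.0) for _ in range(3)])
    y.append(label)

print(f"5-fold CV accuracy: {cross_validated_accuracy(X, y):.2f}")
```

Each fold is scored only by a model that never saw it during training. This is what lets the resulting accuracy serve as the kind of "proxy" for available information that the quoted passage describes, rather than a measure of how well the model memorised its training data.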

The authors say they set out to test whether computer vision algorithms can detect intimate traits of individuals beyond what the human eye can perceive: “This work examines whether an intimate psycho-demographic trait, sexual orientation, is displayed on human faces beyond what can be perceived by humans.”

Given a single facial image, the classifier could correctly distinguish between gay and heterosexual men in 81% of cases, and between gay and heterosexual women in 74% of cases. Given five facial images per person, its accuracy rose to 91% and 83% respectively. The researchers claim the classifier picked up subtle differences in facial structure associated with sexual orientation, and argue that this supports the theory that sexual orientation is biologically influenced: “Consistent with the prenatal hormone theory of sexual orientation, gay men and women tended to have gender-atypical facial morphology, expression, and grooming styles.”
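Figures like "81% of cases" are easier to interpret once the metric is spelled out. The sketch below assumes, as we understand the paper, that the percentages are pairwise figures (the area under the ROC curve). On that reading, a figure is the probability that the classifier scores the correct member of a randomly drawn pair higher, where each pair has one person from each group. The sketch also assumes that the multi-image result combines per-image scores by simple averaging. The helpers `pairwise_accuracy` and `average_scores` are hypothetical, and the scores are invented purely for illustration.

```python
from itertools import product

def pairwise_accuracy(pos_scores, neg_scores):
    """Fraction of (positive, negative) pairs where the positive example scores higher.
    Ties count as half; this quantity equals the area under the ROC curve (AUC)."""
    wins = 0.0
    for p, n in product(pos_scores, neg_scores):
        if p > n:
            wins += 1.0
        elif p == n:
            wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))

def average_scores(per_image_scores):
    """Combine one person's per-image scores by taking their mean (an assumption)."""
    return sum(per_image_scores) / len(per_image_scores)

# Invented classifier scores: one list of three per-image scores per person.
group_a = [[0.9, 0.6, 0.7], [0.4, 0.8, 0.7], [0.55, 0.5, 0.6]]
group_b = [[0.3, 0.5, 0.2], [0.6, 0.2, 0.3], [0.45, 0.7, 0.4]]

# Using only the first image of each person
single = pairwise_accuracy([s[0] for s in group_a], [s[0] for s in group_b])
# Averaging all three images per person
multi = pairwise_accuracy([average_scores(s) for s in group_a],
                          [average_scores(s) for s in group_b])
print(f"single image: {single:.2f}, averaged: {multi:.2f}")  # single image: 0.67, averaged: 1.00
```

In this toy case, averaging smooths out noisy individual images, and the classifier goes from ranking 6 of 9 pairs correctly to all 9. That is one plausible way extra photos can raise a pairwise figure, though it says nothing about how the published numbers were actually computed.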

Two prominent US LGBTQ groups, the Human Rights Campaign (HRC) and GLAAD, immediately denounced the study as “dangerous and flawed… junk science”. They criticised the findings for placing LGBTQ people around the world at risk and for excluding people of colour, bisexual people, and transgender people, arguing that the research made broad and inaccurate assumptions about gender and sexuality.

Commentators, meanwhile, warned that the research raises “the nightmarish prospect” of authoritarian governments scanning people’s faces to determine their sexuality in order to persecute them.

However, the report itself addresses these criticisms. “Some people may wonder if such findings should be made public lest they inspire the very application that we are warning against. We share this concern. However, as the governments and companies seem to be already deploying face-based classifiers aimed at detecting intimate traits, there is an urgent need for making policymakers, the general public, and gay communities aware of the risks that they might be facing already. Delaying or abandoning the publication of these findings could deprive individuals of the chance to take preventive measures and policymakers the ability to introduce legislation to protect people.”

“The laws in many countries criminalize same-gender sexual behaviour, and in eight countries—including Iran, Mauritania, Saudi Arabia, and Yemen—it is punishable by death. It is thus critical to inform policymakers, technology companies, and most importantly, the gay community, of how accurate face-based predictions could be.”

The authors go on to argue:

“We did not create a privacy-invading tool, but rather showed that basic and widely used methods pose serious privacy threats. We hope that our findings will inform the public and policymakers, and inspire them to design technologies and write policies that reduce the risks faced by homosexual communities across the world.”

Important ethical concerns about the research remain, however. Its implications for research on gender and sexuality more broadly are unclear, and the study could be criticised for deliberately relying on biased training data that sorts men and women into a strict binary of sexual orientation.

The research comes at a critical juncture in the development of facial recognition technology, adding to a growing list of ethical and political concerns about its deployment. Facial recognition has been used by the US government to arrest more than 4,000 people since 2010, while other reports demonstrate that facial recognition could soon be used to identify protestors wearing hats, scarves, or even masks. The researchers claim that their findings “expose a threat to the privacy and safety of gay men and women”; only time will tell whether or not the research will lead to greater protections for vulnerable groups.

To find out more about Stanford’s AI research, you can read our interview with the Executive Director of Strategic Research at Stanford University’s Computer Science Department.
