Intelligent automation and society

November 15, 2019

by Fiona J. McEvoy

We are now at the beginning of a new human epoch, and the next few decades will be defined by the way automation transforms our day-to-day lives. Nowhere will these changes be felt more acutely than in the workplace, where a whole gamut of historically human tasks will be intelligently mechanized in an effort to cut costs, increase efficiency, hasten results, and free up human capital for more complex, explicitly cognitive projects.

As with previous industrial revolutions, the promise of an economic boon for business - coupled with a relief from heavy or repetitive duties for workers - prima facie justifies the energy and enthusiasm with which we’ve greeted this new technological era. But it would be naive to embrace a wholesale roll-out of automation without some scrutiny, and the pace of tech development rarely leaves time to glance in the rearview mirror until we are a good distance away.

So, before we’re too far down the track, we must ask: What will an automated future mean for our society? For individuals? For the workplace? These questions are taxing and difficult to agree on, but they are nonetheless critical. Indeed, the ethical application of technology is now at the very center of public conversations about future economic and societal stability, and businesses are slowly taking heed, acknowledging that their decisions and considerations should extend beyond pure commercial objectives to broader concerns about how tech deployment shapes human lives.

The balance

While we know AI has the capacity to create much good, we also know that devastating repercussions could issue from its reckless or ill-considered use. It is never too early to begin to anticipate and mitigate negative downstream effects and pernicious unintended consequences.

In contemplating such broad philosophical queries, thus far most column inches have been dedicated to the “future of work” itself. Thought leaders have been busy asking what will become of human workers when machines are able to complete duties - whether in the factory or the office - with greater speed and accuracy. They’ve also been ruminating on the potential of automation to disrupt and reinvent entire industries, relegating old ways of doing things and consigning certain skills to history. Concerns about mass unemployment have been thoughtfully articulated, and so have proposals about the welfare provisions that will be needed should automation become ubiquitous, as is predicted.

At the same time, some are considering what perpetual worklessness might mean for our psychology and the human condition, and asking whether we are eagerly driving toward a future that sees the best of us stripped of our skills, dependent upon machines, and suffering from depression due to lack of purpose. This is a dystopian take, of course, and a disputed one. Many counter-argue that the relinquishment of certain tasks to machines will actually serve to unlock precious time, allowing humans to rise to new challenges and think more creatively, elevating soft skills and placing more value on the liberal arts. Moreover, they add that an automated future could be the very shift we need to correct the current, crippling work-life balance found in fast-paced economies.

Only time will tell us the fate of our human toil, including which occupations will be spared and what new kinds of work will emerge. But we would be mistaken to think that is the only ethical consideration worth our time when it comes to our automated future. On the contrary, as we delegate tasks and decisions that have, until now, been the sole purview of human judgment, we place increasing faith in unthinking, data-driven systems that may “know” (i.e. spot patterns, identify correlations, and make predictions) but are a long way from being able to “understand.” And with trust comes risk.

Pride and prejudice

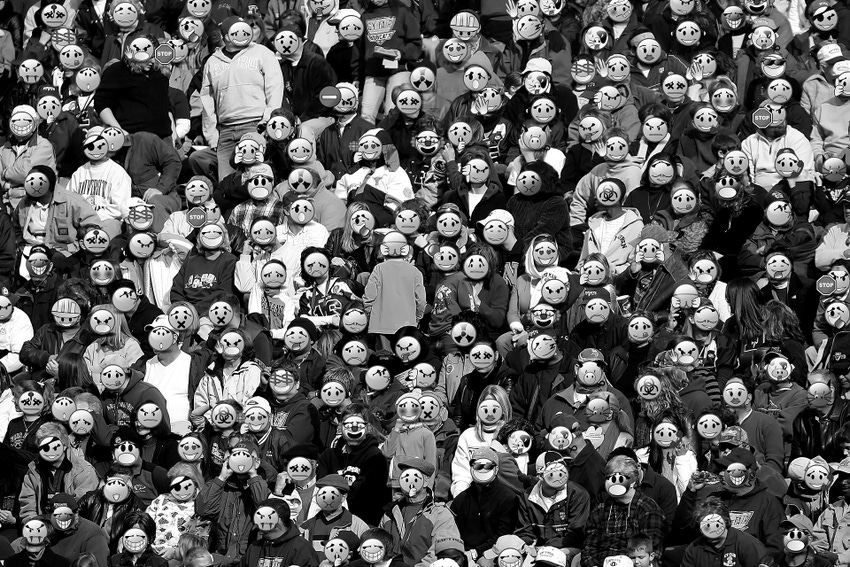

One great concern focuses on automated decision systems that are used in the public domain. Consider technology that assists with hiring, lending, education admissions, policing, and the distribution of public resources, to cite just a handful of examples. How can we ensure that the socially significant judgments they facilitate aren’t biased or skewed? After all, automated systems usually only predict that the future will mirror the past, and if training data was poorly collected or is unrepresentative, there is a very real danger of entrenching and propagating implicit wrongs at speed and scale.

And then we must consider the humans that built these systems and defined their success conditions. What were their unconscious (or conscious) prejudices and predispositions? It has been said often that “algorithms are opinions embedded in code”, and it is so important to remember that no operating technology is completely neutral.

To give a brief example, if an algorithm learns what a “good hire” looks like for your organization based on data that reflects past recruitment biases or a preference for a particular gender, ethnicity, or university, then it could unfairly screen out good applicants who do not fit the predetermined mold. This kind of discrimination isn’t necessarily based on malice, but rather the unthinking system’s propensity to predict that future success looks the same as it always did. Any automated decision system that is trained with data that is laden with historical prejudices or bygone political or social attitudes is liable to take those on.

So what is the solution? Well, it isn’t quite that easy. To reiterate, there is no such thing as a completely unbiased and neutral system. All data, like all people, are biased.

In the first instance, what is most critical is that those deploying automated systems are alive to the possibility of those systems making ethically poor attributions. Beyond that, it is critical that algorithmic decision-making is transparent and explainable, so that the subject of any system-driven decision can clearly see the factors that contributed to the result. But more than this, it is important that companies regularly interrogate their data for bias, and conduct an ongoing review of the decisions issued by their systems. This means making sure that there are always human eyes evaluating, spot-checking, and auditing. Only by using our superior semantic knowledge and understanding can we ensure that fast, smart automated systems work to our economic and societal benefit.
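To make the idea of an ongoing review concrete, here is a minimal sketch of one check a human auditor might run over a system's decisions: compare the rate at which each group is selected, and flag any group whose rate falls well below the best-treated group's. Everything in it is an assumption for illustration: the function names `selection_rates` and `flag_for_review`, the made-up sample decisions, and the 0.8 cut-off, which is loosely borrowed from the "four-fifths" rule of thumb used in US employment guidance. A flag here is a prompt for human eyes, not a verdict of unfairness.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive decisions per group.

    `decisions` is an iterable of (group, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def flag_for_review(decisions, threshold=0.8):
    """Return groups whose selection rate, relative to the highest
    group's rate, falls below `threshold` (an illustrative cut-off)."""
    rates = selection_rates(decisions)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {}  # nobody was selected, so there is nothing to compare
    ratios = {group: rate / best for group, rate in rates.items()}
    return {group: ratio for group, ratio in ratios.items() if ratio < threshold}


# Hypothetical audit sample: 10 decisions each for two made-up groups.
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

if __name__ == "__main__":
    print(selection_rates(sample))
    print(flag_for_review(sample))
```

A single ratio like this cannot settle whether a system is fair, and different fairness measures can disagree with one another. Its value is in telling reviewers where to look first.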

Over the next few decades, machine automation may well take on a number of tasks and processes that now lie with the current workforce, and it has been said that “anywhere we find a human being is a market for AI.” But understanding what fairness looks like is a truly complex matter, and the role of watchful arbiter must, at least for now, rest with humankind.

Fiona is an AI ethics writer and founder of YouTheData.com. Fiona was named one of the 30 Women Influencing AI in San Francisco by RE•WORK.
