October 16, 2018

REDMOND, WA - AI Business reported last week that Google are to drop out of the bidding for a lucrative $10 billion cloud computing contract with the Pentagon. Now, Microsoft employees have signed an open letter calling for their company to do the same.

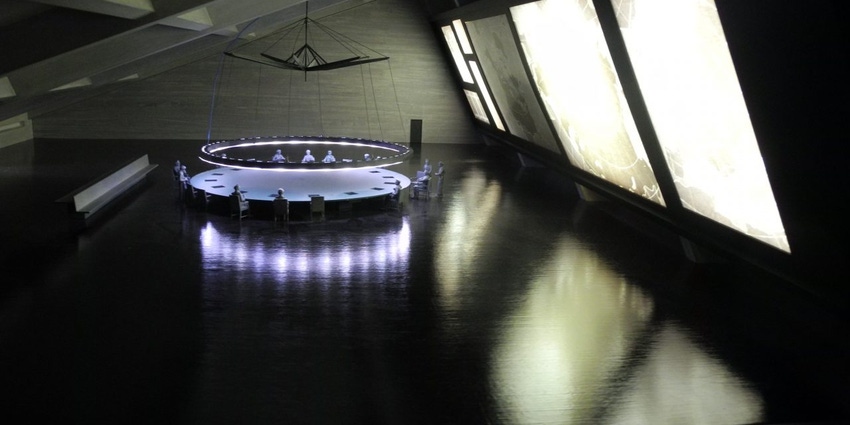

The project, known as JEDI – i.e. the Joint Enterprise Defense Infrastructure cloud – involves shifting huge amounts of U.S. Defense Department data to the commercial cloud. Bids were to be submitted by October 12th for the ten-year contract. Last week, Google announced that, following sustained internal pressure around the use of their AI technology by the U.S. military, they would not pursue the contract.

However, last Tuesday, Microsoft announced they would be submitting a bid - and on Friday, employees of the company signed an open letter in protest of the decision.

"Microsoft, don't bid on JEDI" - Excerpts from the open letter

"We joined Microsoft to create a positive impact on people and society, with the expectation that the technologies we build will not cause harm or human suffering," the letter reads. "Many Microsoft employees don't believe that what we build should be used for waging war. When we decided to work at Microsoft, we were doing so in the hopes of empowering every person on the planet to achieve more,' not with the intent of ending lives and enhancing lethality."

Taking clear inspiration from Google's example, the letter continues: "For those who say that another company will simply pick up JEDI where Microsoft leaves it, we would ask workers at that company to do the same. A race to the bottom is not an ethical position. Like those who took action at Google, Salesforce, and Amazon, we ask all employees of tech companies to ask how your work will be used, where it will be applied, and act according to your principles."

"Recently, Google executives made clear they will not use artificial intelligence "for weapons, illegal surveillance, and technologies that cause 'overall harm'. [...] With a large number of workers vocally opposed, executives were left with no choice but to pull out of the bid."

"So we ask, what are Microsoft's A.I. Principles, especially regarding the violent application of powerful A.I. technology? How will workers, who build and maintain these services in the first place, know whether our work is being used to aid profiling, surveillance, or killing?"

Responsible AI?

The notion of responsible AI has been a huge topic of conversation within the machine learning space in 2018, with organizations the world over looking for ways to reassure their users and customers that the AI they are using is safe, ethical, and accountable.

Whatever the outcome of the open letter, it's clear that tech workers are increasingly taking a stand against management to ensure AI, cloud, and other technologies are used for good - not evil. Perhaps it's this kind of direct action from within tech companies themselves that will keep responsibility at the top of the AI agenda. However, only time will tell whether real responsibility becomes enshrined within the tech and AI worlds.
