Warner Music Group backed the startup behind the songs

At a Glance

- Music streaming platform Spotify has removed tens of thousands of AI-generated tracks from a music startup.

- Boomy reportedly used bots to artificially inflate its audience. Universal Music Group complained about its tracks.

Spotify took down tens of thousands of AI-generated songs as it fights the rising tide of fake streams on its platform.

The FT reported that the music streaming site removed tracks uploaded by Boomy, a startup that offers AI-powered music generation tools.

However, the tracks that Spotify took down made up only 7% of the songs Boomy’s users had uploaded. The songs were reportedly removed because bots were being used to artificially inflate their audience numbers.

Boomy said on Discord that following an investigation into “potentially anomalous activity,” Spotify has restored its ability to publish on the site.

“As the music industry continues to navigate the use of bots and other types of potentially suspicious activity, these pauses are likely to happen more regularly and across a wider set of platforms,” according to Boomy.

According to the FT, recording giant Universal Music Group had complained about Boomy’s tracks. The complaint came after Universal Music threatened to sue music streaming platforms found to be hosting AI-generated music.

AI Business contacted Universal Music for comment.

Why Boomy matters

Based in Berkeley, California, Boomy was founded in 2018 and to date has raised $4.5 million in funding, with the likes of Sound Media Ventures and Warner Music Group among its backers.

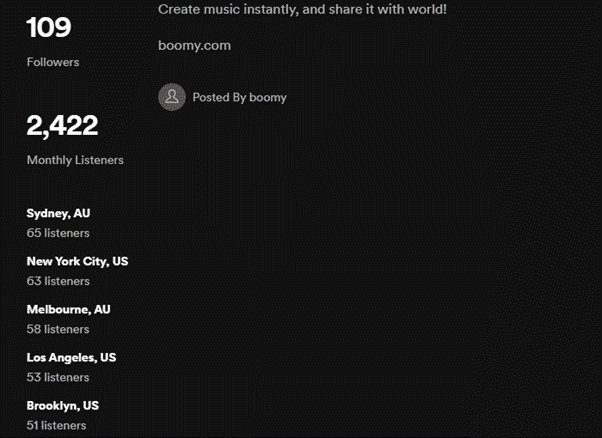

Boomy’s Spotify discography contains only content posted in 2022. One album, titled ‘500 Ways to Have Fun,’ aptly has 500 songs, the most listened to of which has almost 60,000 plays.

Spotify pays artists between $0.003 and $0.005 per stream on average, according to Ditto Music, meaning Boomy could have earned at most around $300 from that one song. The more a song is streamed, the more the artist makes.
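For readers who want to check the arithmetic, the estimate can be reproduced with a rough sketch like the one below. It assumes the Ditto Music per-stream range quoted above and rounds "almost 60,000 plays" up to 60,000, so the result is an upper bound rather than an actual payout figure. The function name `estimate_payout` is hypothetical and is used here only for illustration.

```python
from decimal import Decimal

# Per-stream rates quoted from Ditto Music in the article (US dollars).
LOW_RATE = Decimal("0.003")
HIGH_RATE = Decimal("0.005")


def estimate_payout(streams, low_rate=LOW_RATE, high_rate=HIGH_RATE):
    """Return a (low, high) estimate of earnings for a given stream count.

    This is a back-of-the-envelope calculation: streams multiplied by an
    average per-stream rate. It is not how Spotify actually computes royalties.
    """
    count = Decimal(streams)
    return count * low_rate, count * high_rate


if __name__ == "__main__":
    # Assumption: "almost 60,000 plays" is rounded up to 60,000.
    low, high = estimate_payout(60_000)
    print(f"Estimated earnings: ${low:.2f} to ${high:.2f}")
```

At 60,000 streams, the range works out to between $180 and $300, which matches the roughly $300 ceiling cited above.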

According to Boomy’s Spotify profile, it has 109 followers and just 2,422 monthly listeners.

Copyright issues remain unresolved

For a rightsholder like Universal Music, the concern is that new content is potentially being generated using its artists’ music without permission, which is, in essence, alleged copyright infringement.

AI music-generation tools work this way: Developers feed existing content into the model, which then generates something new using the input data as a reference.

Since most popular music is copyrighted, it could constitute infringement if a company like Boomy were to use music from popular artists to train its models. However, no legal precedent has been set to say such behavior constitutes infringement. No global intellectual property office or copyright registry has set rules on this, either.

The lack of clarity has not deterred UMG, which has already made legal threats over an AI-generated track of Eminem’s voice rapping about cats on YouTube, as well as a second song on Spotify and other music streaming sites featuring replicated vocals of Drake and The Weeknd.

UMG joins companies such as Getty Images in its legal crusade against generative AI models for alleged copyright infringement.
