Klaus Haller talks customized AI, framework layers and AI-specific hardware

January 25, 2022

The opportunities to invest in artificial intelligence (AI) are plentiful, and so is the risk of wasting money on ideas without long-term impact on the organization.

What is your answer to a data scientist who suggests transforming your business with GPT-3? GPT-3 is probably the first algorithm (more precisely, a language model) to be covered widely in the popular press. Microsoft invested one billion dollars in OpenAI, the company behind GPT-3, to gain control over this new technology [Sch20].

But how does GPT-3 relate to your business? What answer do you give your data scientists, or the ten hard-core sales managers trying to get your money by proposing even better projects and algorithms?

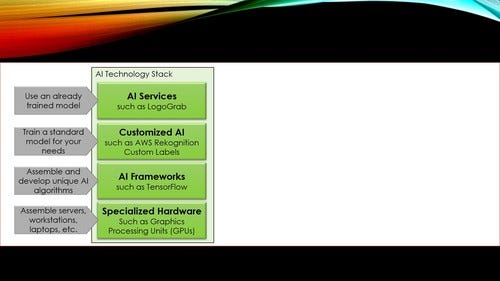

AI has many facets. It can be seen as a technology stack with several layers. Understanding these layers and identifying the ones relevant for innovation within your organization enables you to judge whether a project or technology suggested by a supplier, an external consultant, or an in-house data scientist aligns with the business and IT strategy of your organization.

Technology stacks are widely used for structuring the technologies of a particular area, e.g., the Java world. A Java backend application runs in a Java VM. The Java VM runs on an operating system, which in turn may run in a virtual machine managed by a hypervisor, which runs on physical hardware.

There are similar layers in the world of AI (Figure 1). The lowest layer is the hardware layer. Neural networks, for example, require many, many matrix multiplications. Normal CPUs can perform them, but there are faster hardware options: Graphics Processing Units (GPUs), Field Programmable Gate Arrays (FPGAs), or Application Specific Integrated Circuits (ASICs) [Rob20].
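To make the matrix-multiplication point concrete, here is a minimal pure-Python sketch of one fully connected (dense) layer. It is an illustration only: the function names and layer sizes are hypothetical, and real frameworks hand this work to optimized kernels on the hardware described above. The last function counts the multiply-add operations of a single layer's forward pass.

```python
def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both given as lists of rows."""
    n, k, m = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(m)]
            for i in range(n)]

def dense_forward(batch, weights, bias):
    """Forward pass of a dense layer with ReLU activation for a batch of inputs.

    batch:   batch_size x in_features
    weights: in_features x out_features
    bias:    list with out_features values
    """
    z = matmul(batch, weights)  # the expensive part: one matrix multiplication
    return [[max(0.0, v + b) for v, b in zip(row, bias)] for row in z]

def multiply_adds(batch_size, in_features, out_features):
    """Multiply-add operations in one dense layer's matrix multiplication."""
    return batch_size * in_features * out_features

x = [[1.0, 2.0], [3.0, 4.0]]               # 2 samples with 2 features each
w = [[0.5, -1.0, 2.0], [1.0, 0.0, -0.5]]   # 2 inputs -> 3 outputs
b = [0.0, 1.0, 0.0]
print(dense_forward(x, w, b))              # → [[2.5, 0.0, 1.0], [5.5, 0.0, 4.0]]
print(multiply_adds(256, 4096, 4096))      # → 4294967296
```

A single 4096 x 4096 layer applied to a batch of 256 inputs already needs about 4.3 billion multiply-adds for one forward pass, which is why specialized hardware pays off.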

Innovation on this layer comes from hardware companies such as AMD or NVIDIA. The buyers of such innovative products are server and workstation manufacturers, or public cloud providers directly, because they need solutions for data centers with AI-rich workloads.

The AI frameworks layer comes next. It comprises frameworks for machine learning and AI.

TensorFlow is a well-known example. TensorFlow distributes the workload of training huge neural networks across large, heterogeneous server farms, thereby taking advantage of their computing power [ABC16].

TensorFlow abstracts from the underlying hardware and parallelizes computing tasks autonomously. Data scientists can run the same code and learning algorithms on a single laptop or on a cluster with hundreds of nodes with hardware tuned for AI. They do not have to change their code for different hardware configurations, which saves them a lot of work.
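The following sketch illustrates why the same training code can run on one machine or many. It is a simplified, pure-Python illustration of synchronous data parallelism, one common way frameworks spread training across workers; it is not TensorFlow's API or implementation, and all names in it are hypothetical. Each "worker" computes the gradient on its shard of the batch, and the gradients are averaged. With equal-sized shards, the averaged gradient equals the single-machine gradient.

```python
def gradient(w, xs, ys):
    """Gradient of the mean squared error of the model y = w * x with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

def shard(data, num_workers):
    """Split data into num_workers contiguous, equal-sized shards."""
    size, rem = divmod(len(data), num_workers)
    if rem:
        raise ValueError("batch size must be divisible by the number of workers")
    return [data[i * size:(i + 1) * size] for i in range(num_workers)]

def data_parallel_gradient(w, xs, ys, num_workers):
    """Each worker computes a gradient on its shard; the results are averaged."""
    grads = [gradient(w, xs_part, ys_part)
             for xs_part, ys_part in zip(shard(xs, num_workers), shard(ys, num_workers))]
    return sum(grads) / num_workers

def train(xs, ys, num_workers, steps=100, lr=0.01):
    """Plain gradient descent; only num_workers differs between 'laptop' and 'cluster'."""
    w = 0.0
    for _ in range(steps):
        w -= lr * data_parallel_gradient(w, xs, ys, num_workers)
    return w

xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [3.0 * x for x in xs]   # the true weight is 3
print(train(xs, ys, num_workers=1))
print(train(xs, ys, num_workers=4))
```

Both calls converge to a weight very close to 3.0. Only the `num_workers` argument changes, which mirrors the point that the training code stays the same regardless of how many machines execute it.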

Companies use AI frameworks to develop new AI algorithms and neural network designs. So, if a company wants to develop the next big thing after GPT-3 or a fundamentally new computer vision algorithm, its data scientists use an AI framework to engineer and test the new algorithms.

In other words, even these companies do not innovate on this layer; they work on top of the existing frameworks.

There are only two main scenarios for innovation on this layer, i.e., for improving an existing framework such as TensorFlow or developing a completely new one: academic research, or reaching out to a massive number of data scientists. The latter applies to public cloud providers and AI software companies that want to lure data scientists onto their platforms by offering superior features.

Figure 1: The AI Technology Stack

Next is the customized AI layer. Its specifics are easiest to understand after a discussion of the top layer, the AI services layer. AI services enable software engineers to incorporate AI into their solutions by invoking ready-to-use AI functionality. The engineers do not need any AI or data science know-how. One example is Visua / LogoGrab.

The service detects brand logos in images or video footage [Vis]. It helps to measure the success of marketing campaigns, e.g., by checking how often and for how long a sponsor's brand is on TV during a sports event. It also finds counterfeits and look-alikes of brand products on the internet and in online marketplaces. LogoGrab is an example of a highly specialized AI service.
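To illustrate the sports-sponsoring use case, the sketch below turns per-frame logo detections into on-screen time. It assumes a hypothetical input format, namely one set of detected brand names per video frame sampled at a fixed rate; it does not use VISUA's actual API, and the function names are illustrative.

```python
from collections import defaultdict

def exposure_seconds(frame_detections, frames_per_second):
    """Total on-screen time per brand, in seconds.

    frame_detections: one set of detected brand names per sampled frame
    (hypothetical output format of a logo-detection service).
    """
    frame_counts = defaultdict(int)
    for brands in frame_detections:
        for brand in brands:
            frame_counts[brand] += 1
    return {brand: count / frames_per_second for brand, count in frame_counts.items()}

def longest_exposure_seconds(frame_detections, brand, frames_per_second):
    """Longest uninterrupted stretch during which a brand is visible, in seconds."""
    longest = current = 0
    for brands in frame_detections:
        current = current + 1 if brand in brands else 0
        longest = max(longest, current)
    return longest / frames_per_second

# Six frames sampled at 2 frames per second (three seconds of footage).
frames = [{"A"}, {"A", "B"}, {"A"}, set(), {"B"}, {"A"}]
print(exposure_seconds(frames, 2))               # → {'A': 2.0, 'B': 1.0}
print(longest_exposure_seconds(frames, "A", 2))  # → 1.5
```

Reporting both the total and the longest continuous exposure reflects the two questions in the text: how often and for how long a sponsor is visible.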

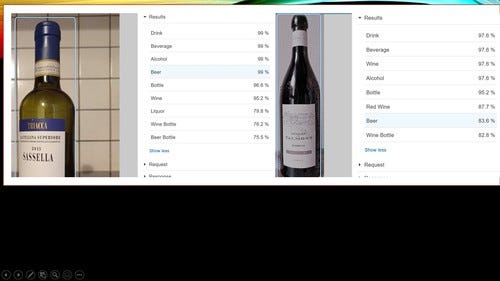

There are also ready-to-use AI services with a broader focus. Examples are Amazon Rekognition and Amazon Comprehend Medical. The latter analyzes patient information and extracts, e.g., data about the patient and their medical condition. Amazon Rekognition supports use cases such as identifying generic objects in pictures, for example a car or a bottle. Do you see more customers drinking beer, white wine, or red wine? Marketing specialists can feed festival pictures to the AWS service, let the service determine what is in each picture, and prepare statistics. Knowing that 80% of the festival visitors drink wine, 15% champagne, and just 5% beer might be a red flag for a brewery deciding whether to sponsor the festival.

Figure 2: Amazon Rekognition with standard labels. In this example, the service reliably determines that this is a bottle. The service struggles with the left one (a half-size bottle) and considers it more likely to be beer than wine. On the right side, the service even detects that this is a red wine bottle.
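The festival statistics described above could be prepared roughly as follows. This is a minimal sketch, assuming boto3 and AWS credentials for the actual call; the set of drink labels and the 80% confidence threshold are assumptions, since which label names Rekognition returns for a given picture must be checked against real responses. The aggregation works on the `Labels` list (entries with `Name` and `Confidence`) that `detect_labels` returns, so it can be tried with stub responses.

```python
from collections import Counter

# Assumption: drink label names to count; verify against real Rekognition output.
DRINK_LABELS = ("Wine", "Champagne", "Beer")

def fetch_labels(image_bytes, min_confidence=80.0):
    """Call Amazon Rekognition DetectLabels for one picture (needs boto3 and credentials)."""
    import boto3  # imported here so the aggregation below runs without boto3
    client = boto3.client("rekognition")
    return client.detect_labels(Image={"Bytes": image_bytes}, MinConfidence=min_confidence)

def drink_shares(responses, drink_labels=DRINK_LABELS, min_confidence=80.0):
    """Share of each drink among all drink sightings across the pictures.

    responses: DetectLabels responses, i.e., dicts with a "Labels" list of
    {"Name": ..., "Confidence": ...} entries. A picture showing two drinks
    counts once for each of them.
    """
    counts = Counter()
    for response in responses:
        names = {label["Name"] for label in response.get("Labels", [])
                 if label["Confidence"] >= min_confidence}
        for drink in drink_labels:
            if drink in names:
                counts[drink] += 1
    total = sum(counts.values())
    return {drink: counts[drink] / total if total else 0.0 for drink in drink_labels}

# Stub responses standing in for four festival pictures.
stub_responses = [
    {"Labels": [{"Name": "Wine", "Confidence": 95.0}, {"Name": "Bottle", "Confidence": 99.0}]},
    {"Labels": [{"Name": "Wine", "Confidence": 90.0}]},
    {"Labels": [{"Name": "Beer", "Confidence": 85.0}]},
    {"Labels": [{"Name": "Champagne", "Confidence": 60.0}]},  # below the threshold
]
print(drink_shares(stub_responses))
```

Keeping the service call separate from the statistics makes it easy to test the counting logic and to change the confidence threshold without calling AWS again.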

Companies can innovate more quickly when building on existing AI services such as LogoGrab. They simply integrate a suitable service into their processes and software solutions. They avoid the effort of managing an AI project, carry no project risk, and face no delays from a project that does not deliver on time.

In many cases, generic AI services are not sufficient for a concrete application scenario. What should a marketing specialist do if she needs to know whether a picture shows Swiss wine, other wine, or other drinks? She needs a neural network tailored and trained for her specific niche.

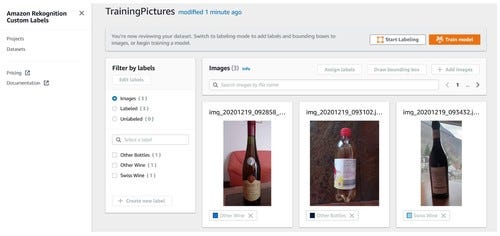

As stated, Amazon Rekognition comes with a set of standard object types it can identify, e.g., in pictures. However, Amazon Rekognition is much more powerful. It enables engineers to train their own neural networks to detect object types specific to their needs. The engineers have to provide sample pictures, e.g., of Swiss wine bottles. Then, Amazon Rekognition Custom Labels trains a machine learning model for these customer-specific objects.

This is one example of the services forming the customized AI layer. They train and provide ready-to-use, customer-specific neural networks based on training data delivered by the customer. In Figure 3, a marketing specialist for Swiss wine wants to understand whether festival visitors prefer Swiss wine, other wine, or other drinks. So, she prepares training and test data with pictures labeled for these three types of drinks.

When she pushes the “train model” button, AWS generates the neural network without any further input and without requiring any AI knowledge.

Figure 3: Preparing training and test data sets in Amazon Rekognition Custom Labels (console view). Data scientists can upload pictures and label them.
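The training itself happens inside the service, but preparing the data still involves one decision: which labeled pictures go into the training set and which into the test set. The sketch below shows a stratified split, assuming the pictures are available as (file name, label) pairs; the file names, the three label names, and the 20% test fraction are hypothetical. Uploading and labeling in the console is not shown.

```python
import random
from collections import defaultdict

def stratified_split(labeled_pictures, test_fraction=0.2, seed=42):
    """Split (picture, label) pairs into training and test sets, label by label.

    Splitting each label separately keeps all drink types represented in both
    sets, even if one type has far fewer pictures than the others.
    """
    by_label = defaultdict(list)
    for picture, label in labeled_pictures:
        by_label[label].append(picture)
    rng = random.Random(seed)  # fixed seed makes the split reproducible
    train, test = [], []
    for label, pictures in sorted(by_label.items()):
        pictures = pictures[:]
        rng.shuffle(pictures)
        n_test = max(1, round(len(pictures) * test_fraction))
        test += [(p, label) for p in pictures[:n_test]]
        train += [(p, label) for p in pictures[n_test:]]
    return train, test

# Hypothetical labeled pictures for the three drink types.
data = ([(f"swiss_{i}.jpg", "swiss_wine") for i in range(10)]
        + [(f"wine_{i}.jpg", "other_wine") for i in range(10)]
        + [(f"drink_{i}.jpg", "other_drink") for i in range(5)])
train_set, test_set = stratified_split(data)
print(len(train_set), len(test_set))  # → 20 5
```

A label with only a single picture would end up entirely in the test set, which is a reminder that the service needs enough sample pictures per label.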

Customized AI is intriguing for companies that want to optimize specific business process steps to gain a competitive advantage.

Thanks to customized AI, they achieve such goals without a large data science team. For example, they can use cameras to check products on the assembly line for production flaws: they collect sample pictures of products that can and cannot be shipped to the customer and let the customized AI service train a neural network. Such services are offered by the usual suspects: cloud providers and analytics and AI software vendors.
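Once such a model is trained, the assembly line needs a rule for acting on its predictions. The sketch below assumes a Rekognition Custom Labels model trained with two hypothetical labels, `shippable` and `defective`; the model ARN, the label names, and both thresholds are placeholders to adapt. The model must be running for `detect_custom_labels` to answer, and the call needs boto3 and AWS credentials. The decision logic only reads the `CustomLabels` list (entries with `Name` and `Confidence`), so it can be tested with stub responses.

```python
def classify_product(image_bytes, model_arn, min_confidence=50.0):
    """Ask a running Rekognition Custom Labels model about one product picture."""
    import boto3  # imported here so the decision logic runs without boto3
    client = boto3.client("rekognition")
    return client.detect_custom_labels(
        ProjectVersionArn=model_arn,  # placeholder: ARN of the trained model version
        Image={"Bytes": image_bytes},
        MinConfidence=min_confidence,
    )

def shipping_decision(response, ship_threshold=95.0, reject_threshold=90.0):
    """Map a DetectCustomLabels response to 'ship', 'reject' or 'inspect'.

    Assumes hypothetical labels 'shippable' and 'defective'. Anything the
    model is unsure about goes to a human for manual inspection.
    """
    scores = {}
    for label in response.get("CustomLabels", []):
        name = label["Name"]
        scores[name] = max(scores.get(name, 0.0), label["Confidence"])
    if scores.get("defective", 0.0) >= reject_threshold:
        return "reject"
    if scores.get("shippable", 0.0) >= ship_threshold:
        return "ship"
    return "inspect"

print(shipping_decision({"CustomLabels": [{"Name": "shippable", "Confidence": 98.5}]}))  # → ship
print(shipping_decision({"CustomLabels": [{"Name": "shippable", "Confidence": 70.0}]}))  # → inspect
```

Routing uncertain cases to manual inspection, rather than forcing a ship or reject decision, is a design choice that limits the damage a misclassification can do.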

AI services, customized AI, AI frameworks, and AI-specific hardware: AI innovation comes in many forms. For innovation, companies and organizations rely mostly on university-trained data scientists, who know the AI frameworks layer quite well.

However, this is the layer that interests academic researchers, not necessarily the layer organizations and companies should focus on. Thus, companies require a strategy for how, and on which layer, they want to innovate with AI or innovate the field of AI. Managers must communicate this strategy clearly and in a motivating way.

Our four-layer AI technology stack can serve as the central concept for understanding and communicating about AI-driven innovation in your company or organization, fostering collaboration between your managers and data scientists.

Klaus Haller is a senior IT project manager with in-depth business analysis, solution architecture, and consulting know-how. His experience covers data management, analytics and AI, information security and compliance, and test management. He enjoys applying his analytical skills and technical creativity to deliver solutions for complex projects with high levels of uncertainty. Typically, he manages projects consisting of 5-10 engineers.

Since 2005, Klaus has worked in IT consulting and for IT service providers, often (but not exclusively) in the financial industry in Switzerland.

References

[ABC16] M. Abadi, et al.: TensorFlow: A System for Large-Scale Machine Learning, 12th USENIX Symposium on Operating Systems Design and Implementation (OSDI ’16), November 2–4, 2016, Savannah, GA, USA

[Rob20] no author: AI Hardware – What they are and why they matter in 2020, RoboticsBiz.com, https://roboticsbiz.com/ai-hardware-what-they-are-and-why-they-matter-in-2020/, last retrieved Nov 11th, 2020

[Sch20] Ronald Schmelzer: GPT-3 AI language model sharpens complex text generation, Oct 22nd, 2020, https://searchenterpriseai.techtarget.com/feature/GPT-3-AI-language-model-sharpens-complex-text-generation

[Vis] LogoGrab is Now VISUA, https://visua.com/logograb-now-VISUA, last retrieved Nov 23rd, 2020
