OpenAI is working on a new open-source language model as competition from free AI models proliferates. Also, the open-source strategy behind Meta's LLaMA.

At a Glance

- OpenAI is reportedly developing a new open-source language model as competition from free AI models proliferates.

- Meta's release of LLaMA to academics led to a flurry of cheaper, smaller language models on par with ChatGPT.

- A Google engineer warned that open-source language models are eating the lunch of Google and OpenAI.

OpenAI is working on a new open-source language model to be released to the public, according to The Information.

While not much is known about the model, the news outlet said OpenAI is unlikely to release a model that will be a true competitor to GPT, which it charges developers to use. OpenAI did release its first two GPT versions as open source but it now has investors looking for a return, notably Microsoft.

However, by releasing an open-source model, OpenAI could defuse some of the competition that has surged of late from free AI models.

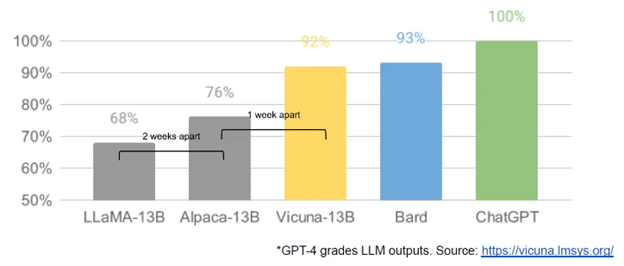

In March, Meta’s release of a capable foundation model called LLaMA to academics set off a flurry of development. Within weeks, researchers created ChatGPT-like alternatives with similar performance. Other open-source language models that developers could tap include those from LAION, Databricks and Stability AI, the maker of Stable Diffusion.

The open source threat

This bounty of open-source models even led a Google engineer to warn that Google and OpenAI will fall behind if their models stay proprietary.

“We aren’t positioned to win this arms race and neither is OpenAI,” wrote Luke Sernau, according to a leaked snapshot of his internal chat posted on Discord and republished in SemiAnalysis. “While we’ve been squabbling, a third faction has been quietly eating our lunch.”

“Things we consider ‘major open problems’ are solved,” Sernau said. He cited running large language models on phones, scalable personal AI and multimodality, among others.

Sernau said that while Google’s models are still better quality, “the gap is closing astonishingly quickly. Open-source models are faster, more customizable, more private, and pound-for-pound more capable.” Developers have been accomplishing feats with just $100 and 13-billion parameter open-source models “that we struggle with at $10M and 540B. And they are doing so in weeks, not months.”

Image: SemiAnalysis, Discord

Sernau also worried that users will not pay for a Google product with restrictions on usage if there are free alternatives of the same quality.

This trend should not have been a surprise, he added. It happened in the text-to-image arena when OpenAI’s proprietary DALL-E was overtaken by open-source Stable Diffusion. As such, this is the “Stable Diffusion moment” for large language models, the engineer wrote.

Google’s best hope is to learn from, and collaborate with, external partners and to prioritize enabling third-party integrations, he opined.

That is Meta’s generative AI strategy. By releasing LLaMA to academics, Meta can incorporate any improvements developers make into its AI platform.

In a recent earnings call with analysts, CEO Mark Zuckerberg said Meta decided to go the open source route because “unlike some of the other companies in the space, we’re not selling a cloud computing service where we try to keep the different software infrastructure that we’re building proprietary.”

“For us, it’s way better if the industry standardizes on the basic tools that we’re using and therefore we can benefit from the improvements that others make,” Zuckerberg said. Also, “others’ use of those tools can … drive down the costs of those things, which makes our business more efficient too.”
