Global lead for Responsible AI shares lessons from client engagement

Companies today are much more aware of forthcoming AI regulations than they were of GDPR, Europe’s robust data protection and privacy rules, before it took effect in 2018. But they are still far from ready for them, according to a new report by Accenture.

While the majority of companies surveyed are quite cognizant of the importance of using AI responsibly, “most of them are at the point where they have established a set of principles but they have not yet managed to implement it across the enterprise,” said Ray Eitel-Porter, global lead for Responsible AI at Accenture, in an interview with AI Business.

The time to act is now because putting an effective framework in place across the enterprise could take years, not months, he said.

The good news is that 80% of companies said they have set aside 10% of their AI technology budget for Responsible AI, while 45% have set aside 20%, according to Accenture’s survey of 850 C-suite executives in 17 geographies across 20 industries. “That’s a very serious commitment,” Eitel-Porter said.

Importantly, companies that already have a risk management structure in place do not have to start from scratch to build Responsible AI, he said.

Setting the ‘bias’ threshold

Responsible AI is the practice of designing and developing AI models that are ethical and support the principles that an organization and society hold dear. While different entities may differ in their core values, society generally has a "fairly strong" shared view of what constitutes an ethical and human-centered way of using AI, Eitel-Porter said.

Notably, Responsible AI needs involvement across the enterprise. It's not just for technologists. Why? While it is true that data scientists do the heavy lifting of getting training data ready, there are some questions that only the business side can answer, Eitel-Porter explained.

One example is setting the threshold for how much bias can be tolerated: Is it 10%? 15%? 20%? Where to set the bias, or error, threshold is a business decision.

The gut reaction from most people would be that there should be no bias at all. But this could lead to inaccurate results. “In most instances, there will be a tradeoff between accuracy and bias,” Eitel-Porter said. “If we remove all bias, we will very often have an inaccurate prediction.”

Let’s consider Jill, who has a low credit score because she has a history of trouble repaying loans or paying on time. When she applies for a loan, that history means that, all other things being equal, there is a higher probability she will default or be late on payments again. Putting Jill on an equal footing with Mary, who has a high credit score (that is, zero bias, all other things being equal), could lead to a wrong prediction of whether Jill would repay the loan.

Addressing gaps in the dataset

Once the error threshold is set, the AI model is tested using historical data. A dataset containing all of the company's customers is segmented into groups and the model is applied to each. The error rate should be fairly consistent across all groups. If one group's error rate is higher than the rest, “mathematically our model is not working as well in this group,” Eitel-Porter said.
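The group-by-group check described above can be sketched in a few lines of Python. This is only an illustration, not Accenture's method: the function names, the sample records and the choice to compare each group against the best-performing group are all assumptions, and `max_gap` stands in for whatever tolerance the business decides on.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """Return {group: share of records where the prediction was wrong}."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def flag_groups(rates, max_gap):
    """Return groups whose error rate exceeds the lowest rate by more than max_gap.

    max_gap is a hypothetical stand-in for the business-defined threshold.
    """
    baseline = min(rates.values())
    return sorted(g for g, rate in rates.items() if rate - baseline > max_gap)


# Made-up historical data: (customer group, predicted to repay, actually repaid)
history = [
    ("A", True, True), ("A", False, False), ("A", True, True), ("A", True, False),
    ("B", True, False), ("B", False, True), ("B", True, True), ("B", False, False),
]

rates = error_rate_by_group(history)
flagged = flag_groups(rates, max_gap=0.10)
print(rates)
print(flagged)
```

In this toy data the model is wrong more often for group B than for group A, so B is the group that would be flagged for a closer look. A real test would use far larger samples and a tolerance chosen by the business.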

But one of the challenges in testing AI models for bias is gaps in the dataset. For example, a bank may want to test whether its AI model discriminates against a certain race. But it does not have race information in its internal database since it did not ask account holders for it. Thus, the bank cannot check for bias using its own dataset.

Eitel-Porter said financial services regulators in the U.K. and academic groups are developing a publicly available dataset that includes so-called “protected” characteristics such as race and gender — and will make it available to companies to test their AI models against. All personally identifiable information (PII) is removed so the data cannot be traced to individuals.

Creating the framework

Many companies can articulate their core principles and list them on their websites, but “it doesn’t mean anyone does anything about them,” Eitel-Porter said. A governance framework ensures that people will respect those principles.

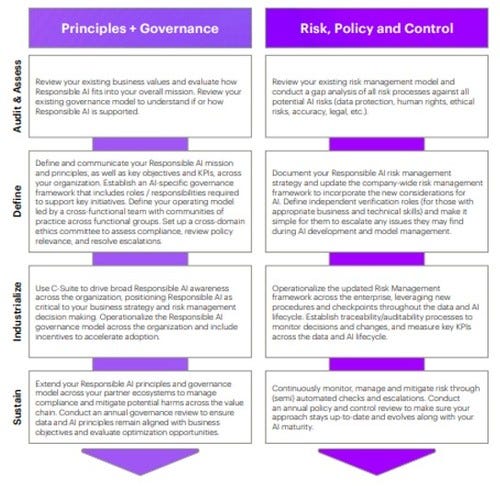

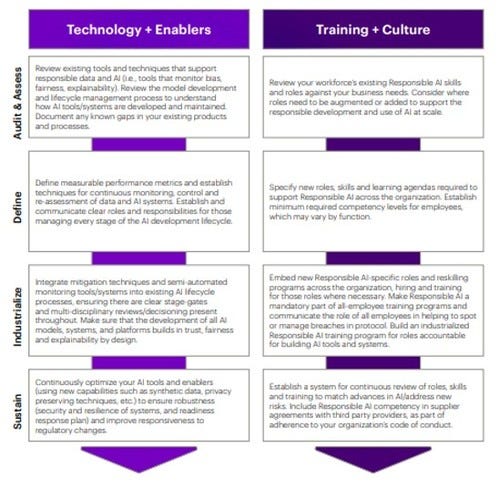

The charts show Accenture's framework for developing Responsible AI, based on its interactions with clients. Eitel-Porter said the horizontal categories at the top are the four pillars that should be the core elements of implementing Responsible AI: Principles and Governance; Risk, Policy and Control; Technology and Enablers; and Training and Culture.

How to read the charts

Start with Principles and Governance to set out the core beliefs. To make people adhere to these principles, turn to the second column, Risk, Policy and Control, to determine the checkpoints and ensure compliance.

To make the implementation successful, read the third column, Technology and Enablers. The company’s data scientists should have experience and training in topics related to Responsible AI, such as avoiding bias, Eitel-Porter said. Third-party tools are also available from hyperscalers (data center giants such as AWS and Azure), as well as from proprietary and open-source providers.

The fourth pillar is Training and Culture, in which the company makes sure people across departments understand they are part of the solution, whether they are in customer service, legal, HR or another unit.

The vertical categories – Audit and Assess, Define, Industrialize, Sustain – help companies figure out where they are in the process, what is still needed, how to fill those gaps and how to sustain the system.
