Global AI leaders sign yet another open letter warning against the existential risk of AI. This time, China joins them.

At a Glance

- Global AI leaders including CEOs of OpenAI and Google DeepMind sign yet another letter warning of AI's existential threat.

- Around the same time, China's National Security Commission, led by President Xi Jinping, called for improving AI guardrails.

- Notably, Meta leaders did not sign the open letter.

To make sure the public and regulators are aware of the existential dangers posed by AI, global leaders and AI luminaries signed yet another open letter warning of future risks.

Around the same time, China’s Xinhua news agency reported that Chinese President Xi Jinping is calling for an acceleration in the modernization of China’s national security system and capacity. This includes improving guardrails around the use of AI and online data.

China is widely considered the West’s potential AI adversary and competitor. Xi's latest mandates are ostensibly given for China's own sovereign security, rather than global safety.

Xi made the remarks at the first meeting of the National Security Commission under the 20th CPC Central Committee. At the meeting, members urged “dedicated efforts” to improve the security governance of internet data and artificial intelligence.

The mandates come as China’s national security issues are “considerably more complex and much more difficult to be resolved,” the group said. As such, China must be prepared to handle “high winds, choppy waters, and even dangerous storms.”

At the meeting, members called for proactively shaping a “favorable” external security environment for China to “better safeguard its opening up and push for a deep integration of development and security.”

Warning from Silicon Valley

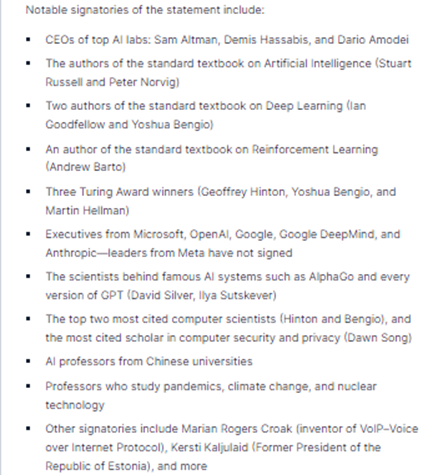

In San Francisco, the nonprofit Center for AI Safety announced on May 30 that a “historic” collection of AI experts signed its open letter, including Turing Award winners Geoffrey Hinton and Yoshua Bengio, as well as OpenAI CEO Sam Altman, Google DeepMind CEO Demis Hassabis, Microsoft co-founder Bill Gates and many others.

The letter has one statement: “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Notably, the nonprofit said Meta leaders did not sign the letter.

In late March, many of the same signatories signed an open letter from the Future of Life Institute warning of similar existential risks posed by advanced AI. Altman himself and other AI leaders have been on a world tour to urge stronger regulation of powerful AI above a certain threshold. And the U.S. has called for the public vetting of advanced AI models from OpenAI, Google, Microsoft and others.
