Bring on the era of the chonky chip

Taiwan Semiconductor Manufacturing Company (TSMC) is reportedly planning commercial production of huge ‘wafer-scale’ processors within two years, following custom work it did for Cerebras Systems.

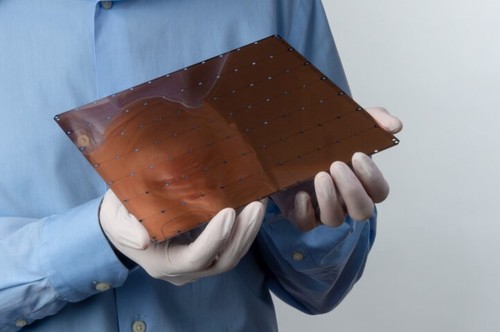

The startup is responsible for the largest chip ever built, with 1.2 trillion transistors and 400,000 AI-optimized cores in a package measuring eight by nine inches.

Because each chip is custom-made, the processors currently cost around $2 million apiece.

By comparison, the largest commercial GPU features 21.1 billion transistors across 1.26 square inches.

Bigger is better

Cerebras' Wafer Scale Engine chip has seen limited success since its launch in 2019, due to the high price and the lack of an ecosystem. But in June, the company announced its first major sale.

The Pittsburgh Supercomputing Center said that it would spend $5m on Neocortex, an AI supercomputer featuring two Cerebras WSE chips and HPE’s shared-memory Superdome Flex servers.

TSMC, apparently, sees a future for the WSE and similar chips, and wants to build out its manufacturing capabilities accordingly.

Taiwan’s DigiTimes reports that the company is planning to improve its Integrated Fan-Out Silicon on Wafer technology in order to build supercomputer-class AI processors.

This technology would allow it to build cheaper chips not only for Cerebras but also for other wafer-scale startups, using its 16nm lithography process.

TSMC is the world’s largest third-party semiconductor manufacturer, printing chips for customers including Nvidia, AMD, Apple, Qualcomm, and Xilinx. It also built the majority of Huawei’s chips – before the US stepped in.
