The company says this is the first ever deployment of reinforcement learning in a production aerospace system

For years, Google’s sister company Loon has operated a growing fleet of helium-filled balloons that traverse the globe, beaming down Internet connectivity to select regions.

But navigating these balloons, and ensuring they take advantage of the prevailing winds to reach their required location, is an immense task.

The company had already shifted that management to an automated system, but this week revealed that it has put self-learning software in charge.

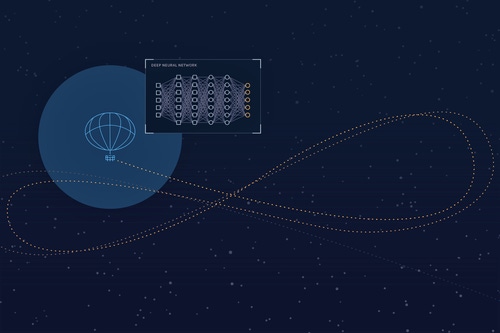

The balloons have no external propulsion, instead inflating an airbag that helps them rise or fall – taking advantage of air currents at different altitudes.

99 Loon balloons

The company clocked up more than one million hours of stratospheric flight back in July 2019, but realized it needed more data to develop a navigation system that uses deep reinforcement learning (RL).

"These days, Loon’s navigation system’s most complex task is solved by an algorithm that is learned by a computer experimenting with balloon navigation in simulation," Loon CTO Salvatore Candido explained in a blog post.

Loon believes that this is the world’s first deployment of reinforcement learning in a production aerospace system, built out of a collaboration with Google AI.

"To prove out the viability of RL for navigating stratospheric balloons, our first goal was to show we could machine learn a drop-in replacement for our then-current navigation controllers," Candido said. "To be frank, we wanted to confirm that by using RL a machine could build a navigation system equal to what we ourselves had built."

The company simulated tens of millions of hours of flight to train the system. Training in simulation is popular in RL, but any discrepancy between the simulation and the real world can cause issues when the controller is moved to the real system.

To see if issues would arise, Loon flew a balloon with the machine-learned controller back in July 2019, pitting it against a nearby balloon running the older-generation navigation system, which uses an algorithm called StationSeeker.

"In some sense it was the machine – which spent a few weeks building its controller – against me – who, along with many others, had spent many years carefully fine-tuning our conventional controller based on a decade of experience working with Loon balloons," Candido said.

The RL system outperformed the conventional one, staying closer to the region it was told to cover. It also had a smoother flight path, Candido explained. "We were amazed by some of the elegant behaviors we observed from this early RL test. Seeing the system had learned to smoothly tack through a highly localized wind pattern made it clear to us that the RL worked."

Another test at a greater scale, with an updated version, proved even more successful. "Overall, the RL system kept balloons in range of the desired location more often while using less power," Candido said.

In a research paper in Nature, the team explained that they tried to optimize time within a radius of 50km (TWR50), with an eye to beating StationSeeker's average 40.5 percent TWR50. "A one percent gain corresponds to 14.4 additional minutes of station-keeping in a 24-hr period," the paper stated.

The RL controller managed 55.1 percent, "so the difference amounts to a substantial 3.5hr per day average improvement in time spent near the station."
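The paper's figures can be checked with simple arithmetic. The sketch below is illustrative only: it takes the two TWR50 percentages quoted above and converts the gap into time per day. The function name `twr_gain_minutes_per_day` is hypothetical and does not come from Loon or the paper.

```python
MINUTES_PER_DAY = 24 * 60  # 1,440 minutes in a 24-hour period


def twr_gain_minutes_per_day(baseline_pct: float, new_pct: float) -> float:
    """Extra minutes per day spent in range, given two TWR50 percentages.

    Hypothetical helper for illustration; not taken from the paper.
    """
    return (new_pct - baseline_pct) / 100.0 * MINUTES_PER_DAY


# One percentage point of TWR50 expressed in minutes per day.
minutes_per_point = twr_gain_minutes_per_day(0.0, 1.0)

# StationSeeker averaged 40.5 percent; the RL controller managed 55.1 percent.
gain_minutes = twr_gain_minutes_per_day(40.5, 55.1)
gain_hours = gain_minutes / 60

print(f"{minutes_per_point:.1f} min per percentage point")
print(f"{gain_minutes:.1f} min/day, about {gain_hours:.1f} hr/day")
```

The 14.6-point gap works out to roughly 210 minutes a day, which matches the 3.5 hours quoted in the paper.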

Next came the full deployment. "The key difference in approach is that instead of engineers building a specific navigation machine that is really good at steering balloons through the stratosphere, we’re instead (with RL) building the machine that can leverage our computational resources to build the navigation machines that were originally designed by engineers like me," Candido said.

With Loon facing intense competition from other approaches to providing Internet to remote places, from drones to the Low Earth Orbit satellite constellations planned by SpaceX and Amazon, it is not clear how useful Loon will prove.

However, in their paper, the researchers argue that the capability marks a more profound shift in autonomous control: “In designing intelligent agents that carry out tasks autonomously in the real world, we encounter the issue of domain boundaries – for instance, what a plate-grasping robot does once its dishwasher is empty.

“Currently, in between bouts of cognition, scripted behaviors and engineers take over from the agent to reset the system to its initial configuration, but this reliance on external agency limits the agent’s autonomy. By contrast, station-keeping offers an example of a fundamentally continual and dynamic activity, one in which ongoing intelligent behavior is a consequence of interacting with a chaotic outside world,” they state.

“By reacting to its environment instead of imposing a model upon it, the reinforcement-learning controller gains a flexibility that enables it to continue to perform well over time. In our pursuit of autonomous intelligence, we may do well to pay attention to emergent properties of these and other agent–environment interactions.”
