And other news from Google I/O 2021

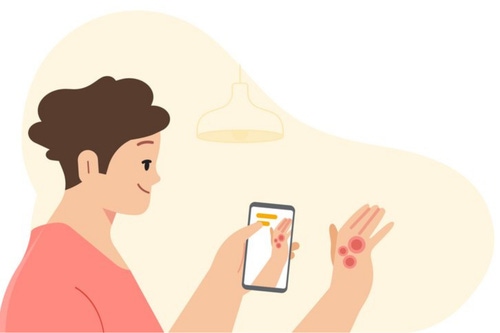

Google has developed an AI-based tool that can assist users in identifying dermatological issues and answering questions about their skin, hair, and nails.

Revealed at the Google I/O conference, the yet-unnamed tool relies on mobile device cameras and a web-based application to identify ailments and provide information on suggested conditions.

“Each year we see almost ten billion Google Searches related to skin, nail, and hair issues. Two billion people worldwide suffer from dermatologic issues, but there's a global shortage of specialists,” Google Health product manager Peggy Bui and technical lead Yuan Liu said in a blog post.

“While many people’s first step involves going to a Google Search bar, it can be difficult to describe what you’re seeing on your skin through words alone.”

Google said it hopes to launch the tool as a pilot in Europe “later this year.” The disclaimer accompanying the blog post states that it “has not been evaluated by the U.S. FDA for safety or efficacy” and is thus “not available in the United States.”

It’s not a tumor

The dermatology assist tool requires a user to take three images of the skin, hair, or nail concern from different angles. Users are then asked about their skin type, how long they’ve had the issue, and other questions that help narrow down the possibilities.

Google’s machine learning model analyzes the information and draws on its knowledge of 288 skin conditions to give users a list of possible matches that they can then research further.

For each matching condition, the tool will show users dermatologist-reviewed information and answers to commonly asked questions, along with similar matching images from the web.

Liu and Bui stressed that the tool is not intended to provide a diagnosis or to substitute for medical advice “as many conditions require clinician review, in-person examination, or additional testing like a biopsy.”

Instead, they said the tool aims to give users access to authoritative information so they can make a more informed decision about their next steps.

The tool itself has been developed over three years of machine learning research and product development. The pair said that several peer-reviewed papers that validate the company’s AI model have already been published, and more “are in the works.”

One of their papers, which featured in Nature Medicine, debuted the deep learning approach to assessing skin diseases and suggested that an AI-based system can achieve accuracy that is on par with US board-certified dermatologists.

Bui and Liu’s most recent paper details how non-specialist doctors can use AI-based tools to improve their ability to interpret skin conditions.

"To make sure we're building for everyone, our model accounts for factors like age, sex, race, and skin types — from pale skin that does not tan to brown skin that rarely burns," the authors said.

"We developed and fine-tuned our model with de-identified data encompassing around 65,000 images and case data of diagnosed skin conditions, millions of curated skin concern images, and thousands of examples of healthy skin — all across different demographics."

The AI model behind the tool has passed clinical validation and received a CE mark as a Class I medical device in the EU. It has not been evaluated by the US Food and Drug Administration (FDA).

From Pluto to paper airplanes

Google’s I/O event had other notable announcements around AI, including a new language model called LaMDA which was shown having ‘conversations’ with the planet Pluto and a paper airplane.

Google CEO Sundar Pichai unveiled the language model in a pre-recorded demo.

During the demo, LaMDA (Language Model for Dialogue Applications) assumed the roles of the planet Pluto and a paper airplane, and responded to questions as those objects would.

When asked ‘what else do you wish people know about you,’ LaMDA posing as Pluto responded, “I wish people know that I am not just a random ice ball. I am actually a beautiful planet.”

A blog post authored by Eli Collins, product management VP at Google, and Zoubin Ghahramani, its senior research director, said that the language model will eventually be used across Google products, including the search engine, Google Assistant, and Workspace platform.

The pair said that LaMDA was trained on dialogue and that it picked up on the nuances that distinguished open-ended conversation from other forms of language.

“LaMDA can engage in a free-flowing way about a seemingly endless number of topics, an ability we think could unlock more natural ways of interacting with technology and entirely new categories of helpful applications,” the pair wrote.

‘It’s as if they’re in front of me’

Google also unveiled a video chat booth that can create a three-dimensional representation of the person you're talking with.

Dubbed Project Starline, the platform relies on multiple cameras and sensors to capture a person’s appearance and shape, and uses those images to create a 3D model that's broadcast in real-time.

A preview shown at the event sees Starline used for person-to-person calls, with both sides using the platform. Group calls were not shown.

Google VP Clay Bavor said the company has been working on Starline for a few years, looking to advance communication tools.

“Project Starline is currently available in just a few of our offices and it relies on custom-built hardware and highly specialized equipment. We believe this is where person-to-person communication technology can and should go, and in time, our goal is to make this technology more affordable and accessible, including bringing some of these technical advancements into our suite of communication products,” Bavor said.

MUMs the word

Another AI innovation unveiled at the I/O event was MUM (Multitask Unified Model), a new type of deep learning model that is supposedly 1,000 times more powerful than Google’s BERT (Bidirectional Encoder Representations from Transformers).

“Today's search engines aren't quite sophisticated enough to answer the way an expert would. But with MUM, we're getting closer to helping you with these types of complex needs. So in the future, you’ll need fewer searches to get things done,” Pandu Nayak, the company’s search vice president and Google Fellow, said.

“MUM has the potential to transform how Google helps you with complex tasks. Like BERT, MUM is built on a Transformer architecture, but it's 1,000 times more powerful. MUM not only understands language but also generates it.

“It’s trained across 75 different languages and many different tasks at once, allowing it to develop a more comprehensive understanding of information and world knowledge than previous models. And MUM is multimodal, so it understands information across text and images and, in the future, can expand to more modalities like video and audio,” Nayak added.
