Augmented Reality Microscope Enables More Accurate Tumor Diagnoses

U.S. Department of Defense partners with Google and Jenoptik to develop the Augmented Reality Microscope.

At a Glance

- The U.S. Department of Defense teamed up with Google and Jenoptik to develop the Augmented Reality Microscope (ARM).

- ARM helps pathologists more accurately and quickly diagnose cancerous tumors.

- The DoD is spending $1.7 billion a year on cancer-related treatment in military health care.

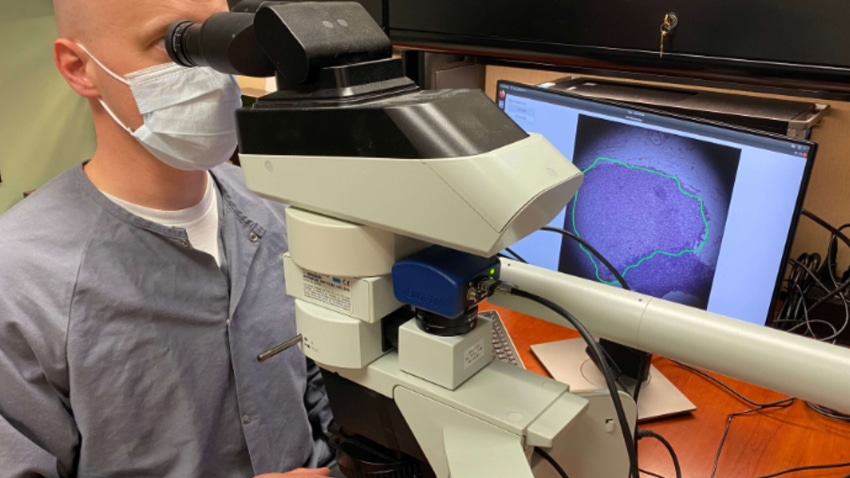

The U.S. Department of Defense (DoD) has teamed up with Google to build an AI-powered microscope, called the Augmented Reality Microscope (ARM), that can help pathologists better diagnose tumors. The tool is especially useful when clinicians want a second opinion but cannot access other resources, or when they need a third opinion because doctors disagree on a diagnosis.

The DoD selected Google to develop the software and Jenoptik, an optical technology company, to handle the hardware. Thirteen ARMs are being tested with a variety of users and cases, and feedback from a diverse set of pathologists is helping to refine the AI models.

The DoD spearheaded the venture under the Defense Innovation Unit's (DIU) Predictive Health program, which aims to use AI to improve military health care. Around $1.7 billion of the DoD's annual budget is spent on cancer-related treatment. At present, pathologists make the final determination of whether a tumor is cancerous after looking at a biopsy sample through a microscope. But this approach is time-consuming and open to errors. Moreover, the number of medical specialists who can perform this diagnosis is declining.

The AI-enabled microscope has the potential to increase the accuracy and efficiency of diagnosis, according to the DoD. Studies have shown that pathologists using AI and machine learning are able to identify cancerous nodes more accurately and quickly.

“Doctors have used microscopes for over 100 years. Along with anesthetics and antibiotics, microscopes are one of the three great innovations that transformed medicine to a modern, safe, scientific profession,” said Dr. Niels Olson, chief medical officer at DIU, in a blog post. “Full digitization of microscopy has lagged due to the enormous amount of data. Instead, the ARM is the self-driving car of microscopes: it doesn’t need to push all the data to the cloud. It can make inferences in real time, in the doctor’s lab, even offline, taking the technology to the next level.”

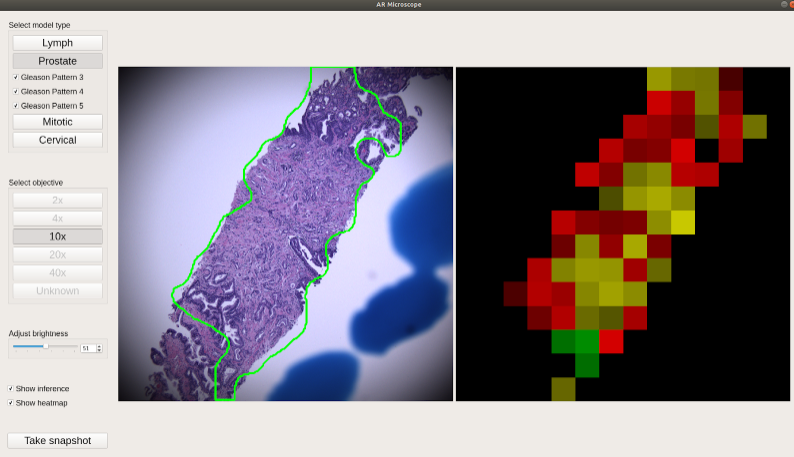

The AI models can outline where cancer is detected on the glass slide, overlaying their analysis directly onto the microscope’s field of view so that the result is clear to the pathologist. The models can also help evaluate the tumor’s aggressiveness. A monitor shows a heat map indicating the tumor’s boundary in pixelated form. Pathologists can take screen grabs of slides, which take up less storage space and cost less than traditional image storage.

ARM monitor display. Left image shows inference highlighting on a suspected cancer on a tissue sample. Right image is a heat map of the same tissue sample for additional cueing.
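As a rough illustration of the outlining and heat-map idea described above, the toy sketch below thresholds a small grid of per-pixel scores and marks the boundary of the flagged region. It is not the ARM's actual software, which the article does not detail: the grid values, the 0.5 threshold, and the function names `tumor_mask`, `outline`, and `render` are all assumptions made for this example.

```python
from typing import List, Set, Tuple

Grid = List[List[float]]

# Hypothetical per-pixel scores (0 = benign-looking, 1 = tumor-looking).
# These numbers are invented for illustration, not real model output.
sample: Grid = [
    [0.1, 0.1, 0.1, 0.1, 0.1],
    [0.1, 0.8, 0.9, 0.8, 0.1],
    [0.1, 0.9, 0.95, 0.9, 0.1],
    [0.1, 0.8, 0.9, 0.8, 0.1],
    [0.1, 0.1, 0.1, 0.1, 0.1],
]


def tumor_mask(prob: Grid, threshold: float = 0.5) -> List[List[bool]]:
    """Flag every pixel whose score meets the (assumed) threshold."""
    return [[p >= threshold for p in row] for row in prob]


def outline(mask: List[List[bool]]) -> Set[Tuple[int, int]]:
    """Return flagged pixels that touch an unflagged pixel or the grid edge."""
    rows, cols = len(mask), len(mask[0])
    edge: Set[Tuple[int, int]] = set()
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c]:
                continue
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                outside = not (0 <= nr < rows and 0 <= nc < cols)
                if outside or not mask[nr][nc]:
                    edge.add((r, c))
                    break
    return edge


def render(prob: Grid, threshold: float = 0.5) -> List[str]:
    """Draw a text overlay: '#' boundary, '+' interior, '.' background."""
    mask = tumor_mask(prob, threshold)
    edge = outline(mask)
    return [
        "".join(
            "#" if (r, c) in edge else "+" if mask[r][c] else "."
            for c in range(len(mask[r]))
        )
        for r in range(len(mask))
    ]


if __name__ == "__main__":
    print("\n".join(render(sample)))
```

In this sketch the `#` characters play the role of the outline drawn over the field of view, while the underlying score grid corresponds to what a heat map would color.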

“Google Public Sector is proud to help DIU leverage AI to advance early cancer detection,” said Aashima Gupta, global director of Healthcare Strategy and Solutions, Google Cloud, in a statement. “Our collaboration with DIU gives pathologists an AI assistant that helps them deliver more accurate and timely cancer diagnosis, transforming the health care experience for the military community and beyond.”
