October 25, 2017

Ever been asked if you're a robot? Most people have been, thanks to Google's reCAPTCHA API, which is used across the web to verify whether site visitors are bots or humans. What many people aren't aware of, however, is that each time they complete one of these security checks, they are unwittingly helping to build the datasets that train Google's machine learning models.

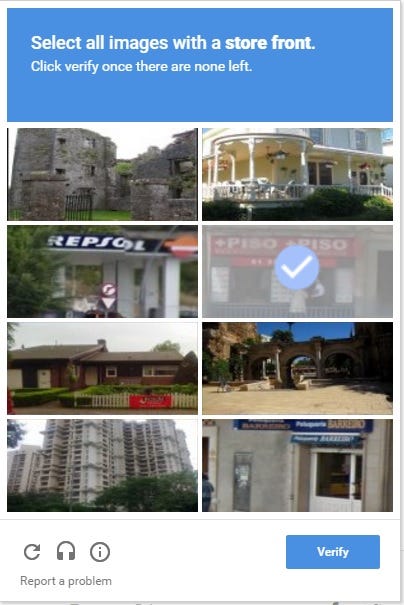

"Select all images with a orange"

reCAPTCHA is an extremely effective security API for sites to install, easily protecting them from spam. CAPTCHAs have come a long way since the days when we had to type out whatever distorted text appeared in the box. The introduction of noCAPTCHA earlier this year seemed to have finally rid us of this often irritating - yet necessary - hurdle to site registration. noCAPTCHA works by tracking user behaviour across a site - factors such as mouse movements and navigation speed - to predict whether or not you might be an automated bot.

If the result of that check is inconclusive, you will be served the image-based reCAPTCHA you might be familiar with, such as "click all images with a 'bridge'". Complete it and you will be granted entry to the site. In the process, however, you are also labelling images on Google's behalf to improve their machine vision algorithms.

"reCAPTCHA is a free service that uses an advanced risk analysis engine to protect your app from spam and other abusive actions. If the service suspects that the user interacting with your app might be a bot instead of a human, it serves a CAPTCHA that a human must solve before your app can continue executing," the company explain on the reCAPTCHA site.
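For developers, the flow described in that quote ends with a server-side check: the site sends the token produced by the widget to Google and only continues if Google confirms it. The following is a minimal sketch of that step, assuming the publicly documented `siteverify` endpoint and its `secret`, `response` and `remoteip` parameters. The function names `post_form` and `is_human`, and the injectable `post` argument, are illustrative choices and not part of any Google library.

```python
import json
import urllib.parse
import urllib.request

# Google's documented verification endpoint for reCAPTCHA tokens.
VERIFY_URL = "https://www.google.com/recaptcha/api/siteverify"


def post_form(url, fields):
    """POST form-encoded fields and return the decoded JSON response."""
    data = urllib.parse.urlencode(fields).encode("utf-8")
    with urllib.request.urlopen(url, data=data, timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))


def is_human(token, secret, remote_ip=None, post=post_form):
    """Return True only if the verification response reports success.

    `post` is injectable (a hypothetical convenience) so the logic can be
    exercised without a network connection.
    """
    fields = {"secret": secret, "response": token}
    if remote_ip:
        fields["remoteip"] = remote_ip  # optional parameter
    result = post(VERIFY_URL, fields)
    return bool(result.get("success"))
```

In a typical integration the token arrives in the submitted form as the `g-recaptcha-response` field, and the secret is the private key issued when the site registers for the service. Treating any response without `"success": true` as a failure keeps the check conservative.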

Streamlining Training Data with reCAPTCHA

How is this possible? Machine vision traditionally relies upon - among other things - carefully labelled training datasets. You feed a neural network 100,000 photos of lions and 100,000 photos of other things until it learns for itself what a lion actually looks like. Think ImageNet: a 12-year-long image database project in which crowd workers labelled over 14 million images with nouns.
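To make the idea of learning from labelled examples concrete, here is a toy sketch. It deliberately swaps the neural network for a much simpler nearest-centroid classifier, and the two-number "features" stand in for whatever a real vision system would extract from a photo. All names and values are hypothetical.

```python
def train_centroids(examples):
    """Average the feature vectors for each label.

    examples: list of (feature_vector, label) pairs.
    Returns a dict mapping each label to its mean feature vector.
    """
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {
        label: [v / counts[label] for v in acc]
        for label, acc in sums.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    def squared_distance(centre):
        return sum((a - b) ** 2 for a, b in zip(features, centre))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Made-up labelled data: each pair is (features, human-supplied label).
labelled = [
    ([0.9, 0.8], "lion"),
    ([0.8, 0.9], "lion"),
    ([0.1, 0.2], "other"),
    ([0.2, 0.1], "other"),
]
model = train_centroids(labelled)
```

With this data, `predict(model, [0.7, 0.9])` returns `"lion"` and `predict(model, [0.3, 0.2])` returns `"other"`. The point is only that the labels do the teaching: without a human saying which examples are lions, there is nothing to average.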

The reCAPTCHA system enables Google to do this en masse every single time an Internet user encounters it. When you have to label all the photos that show a bridge, or a lion, or a street sign, you're training Google AI to recognise bridges, lions, and street signs.
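One way answers from many users could be turned into training labels is simple agreement: keep a label for an image only once enough people have given the same answer. The sketch below illustrates that idea. It is an assumption for illustration only, since the article does not say how Google actually combines responses, and the thresholds and names are hypothetical.

```python
from collections import Counter


def aggregate_labels(votes, min_votes=3, min_agreement=0.7):
    """Combine per-user answers into one label per image.

    votes: iterable of (image_id, label) pairs, one per user answer.
    An image gets a label only if it has at least `min_votes` answers
    and the most common answer reaches the `min_agreement` share.
    """
    per_image = {}
    for image_id, label in votes:
        per_image.setdefault(image_id, Counter())[label] += 1

    accepted = {}
    for image_id, counts in per_image.items():
        total = sum(counts.values())
        label, top = counts.most_common(1)[0]
        if total >= min_votes and top / total >= min_agreement:
            accepted[image_id] = label
    return accepted


# Hypothetical answers: five users saw img1, only two saw img2.
votes = (
    [("img1", "bridge")] * 4
    + [("img1", "not_bridge")]
    + [("img2", "bridge"), ("img2", "not_bridge")]
)
labels = aggregate_labels(votes)
```

Here `labels` contains only `img1`, labelled `"bridge"`: four of five users agreed, while `img2` has too few answers to be trusted.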

Google are quite transparent about this fact, even admitting on the reCAPTCHA website that "Every time our CAPTCHAs are solved, that human effort helps digitize text, annotate images, and build machine learning datasets. This in turn helps preserve books, improve maps, and solve hard AI problems." The data collection involved is also set out in the terms of service for the API, which developers agree to when they implement it on their own platforms:

"reCAPTCHA Terms of Service: You acknowledge and understand that the reCAPTCHA API works by collecting hardware and software information, such as device and application data and the results of integrity checks, and sending that data to Google for analysis. Pursuant to Section 3(d) of the Google APIs Terms of Service, you agree that if you use the APIs that it is your responsibility to provide any necessary notices or consents for the collection and sharing of this data with Google."

Whether the users of the API are fulfilling this responsibility is another question, but with the formation of a new DeepMind ethics research group, the firm are no doubt aware of the ethical implications of AI. Either way, the tech giant's novel way of using what is ostensibly a security measure to carry out the arduous manual work behind better AI is nothing short of genius - and exceptionally ironic.
