Enough Is Enough: Images of AI Are Too Cliché

This nonprofit says such stereotypes are harmful

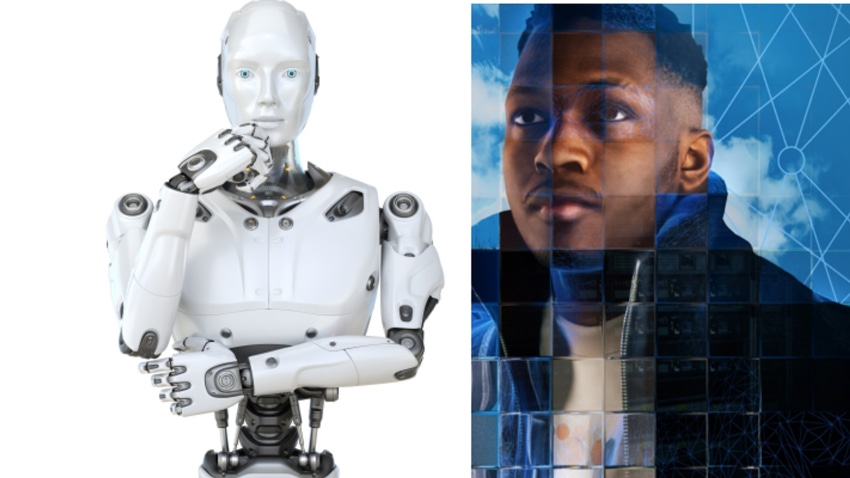

Cliché images of AI are all over the internet – whether a generic picture of a robot with white plastic skin or a Terminator-esque cyborg out to kill innocent civilians.

To many, these images are part and parcel of a technology that, while decades old, is only now entering the mainstream consciousness and is often depicted inaccurately by media that do not understand it.

But one group has had enough. Better Images of AI is a nonprofit group led by We and AI CEO Tania Duarte. The group warns that without wider public comprehension of AI, mistrust in technology will grow and groups will continue to be underrepresented.

This week, Better Images of AI launched a report that acts as a guide for creators on how to better depict AI in their content. It was unveiled at an event at the Alan Turing Institute in London, which has been supporting the work, alongside the BBC R&D team.

“The idea of diversifying is really important to us as an institute because it doesn't help us or anybody to understand the science if the visuals actually detract from the complexity of the issues that are faced,” said Mark Burey, head of communications and marketing at the Alan Turing Institute.

The group’s image library is carefully curated, and its images can be downloaded and used by anyone for free under a Creative Commons license, provided they are credited. The nonprofit’s images have been used by AI Business, The Washington Post, the London School of Economics and Time Magazine, among others.

Kanta Dihal showcasing the report at an event at the Alan Turing Institute, London

The report covers research by Kanta Dihal, a senior research fellow at the University of Cambridge’s Leverhulme Centre for the Future of Intelligence, as well as findings from a series of roundtables and workshops in which stakeholders and creatives from across AI came together to identify what constitutes a good or bad image of AI.

And the spike in AI interest stemming from ChatGPT means the public need to be able to understand what is being shown in the media, Duarte said.

Dihal also authored a paper titled "The Whiteness of AI," in which she argues that AI is often portrayed as a white humanoid robot. At the launch of the Images report, Dihal said these depictions carry associated ideas: that AI is built by and for white people, and that whiteness is linked with greater intelligence.

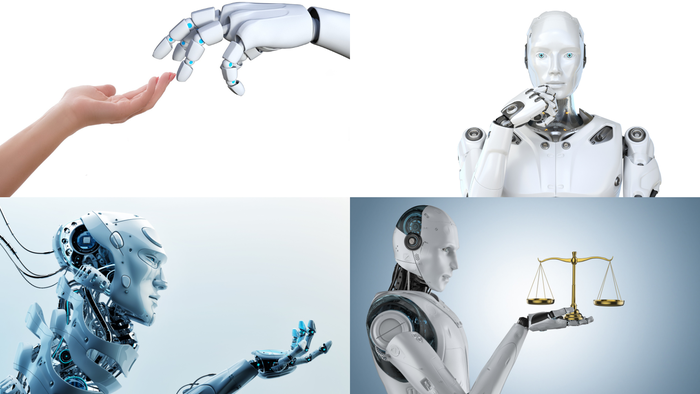

The nonprofit also analyzed “the most common, unhelpful image tropes,” she said. Dihal warned of an increase in outlets using images of robots holding things like gavels and scales when referencing instances of increased automation in court and legal settings.

What constitutes a bad image of AI?

There are several parameters that can determine whether an image is unhelpful in its depiction of AI. According to the nonprofit, tropes to avoid include references to science fiction and white robots.

Also to be avoided are variations on Michelangelo’s fresco The Creation of Adam, anthropomorphized AI and human brain outlines.

Tropes to avoid, according to Better Images of AI

The newly published report found that creators find it difficult to source decent images of AI and that deadlines, particularly in media and news settings, lead to quick decisions being made regarding image use.

The report also states that some of these unhelpful stock images have become recognizable as “a shorthand for AI, especially for non-technical audiences.”

How to pick better images of AI

The Better Images of AI team points to four tenets for picking a better image of AI: honesty, humanity, necessity and specificity. Images need to represent the tech as accurately as possible, showing what it can do without extrapolating to an unlikely future.

Any images including people should be inclusive of the groups involved in the development and deployment of AI, from software engineers all the way to end users. AI can encompass many things, but oftentimes content is accompanied by images of robots where none are involved.

The group’s better AI image library contains pictures of hardware, autonomous driving, human-AI collaboration and computer vision, but it wants to build out the image library in the near future.

“We'd like to have more topics, things like AI in engineering or AI in space – lots more nuanced choices within those topics. And we need them to be used by more people and organizations.”

What about AI-generated images?

But could text-to-image tools like Midjourney and DALL-E help the Better Images of AI team build out its library further?

Duarte told AI Business that they do have some images in the library that use AI. She expressed hesitancy but was not against the idea entirely.

“This has been months of discussion. Some of our success may come from people looking for images generated by AI. We are very conscious that this project is about showcasing the value of creativity and creative people. In our workshops, we have had people who are able to think about new metaphors, new concepts, new ways of expression, with input from technology,” Duarte said.

“There's no one view but it’s amazing that you can test new concepts by giving a prompt to an image generator to help you come up with new ideas. I would only hope that we can get to a stage where artists can be credited in the datasets that image generators are trained on," she added.

Dihal said that AI-generated images of AI currently just regurgitate existing tropes, and that the need is to change those tropes, not reproduce them.
