Intel, HPE to Develop Generative AI Models

The AI models will have up to a trillion parameters and will run on the Aurora supercomputer

At a Glance

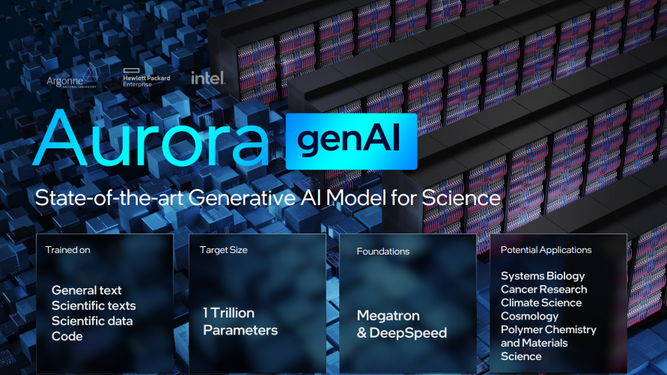

- Intel, HPE, Argonne National Laboratory and the U.S. Department of Energy will develop generative AI models for science.

- They will use the Aurora supercomputer, designed by Intel and Cray, to run the models.

- Aurora will offer more than 2 exaflops of compute performance once it goes online later this year.

Chipmaker Intel is partnering with HPE, Argonne National Laboratory and others to develop a series of generative AI models for scientific research.

The project was announced at the ISC High Performance Conference in Germany. The partners plan to run it on the Aurora exascale supercomputer once the system goes online this year. The AI models will have as many as one trillion parameters.

The generative AI models will be trained on general text, code, scientific texts and structured scientific data from disciplines such as biology, chemistry, materials science, physics and medicine, along with other sources.

The models will be used for varied scientific applications, such as designing molecules and materials, and synthesizing knowledge across millions of sources to suggest new experiments in systems biology, polymer chemistry, climate science and cosmology.

Another use case is to speed up the identification of biological processes related to cancer and other diseases.

Large language models need heavy-duty compute power, and Intel said Aurora will offer more than 2 exaflops of compute performance. The system houses nearly 64,000 GPUs and 21,250 CPUs, as well as more than 1,000 DAOS storage nodes.
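For a rough sense of scale, the sketch below estimates how much memory the weights of a one-trillion-parameter model would occupy. The byte widths (4 for fp32, 2 for bf16), the rounded GPU count and the even split across GPUs are illustrative assumptions, not figures from the announcement.

```python
# Back-of-envelope sketch. Byte widths and even sharding are assumptions,
# not details from the Intel/Argonne announcement.
PARAMS = 1_000_000_000_000  # "as many as one trillion parameters"
GPUS = 64_000               # the article says "nearly 64,000 GPUs"; rounded here

BYTES_PER_PARAM = {"fp32": 4, "bf16": 2}  # common numeric formats (assumed)


def weight_bytes(params: int, bytes_per_param: int) -> int:
    """Bytes needed for the weights alone (no optimizer state or activations)."""
    return params * bytes_per_param


def per_gpu_megabytes(total_bytes: int, gpus: int) -> float:
    """Megabytes per GPU if the weights were split evenly across all GPUs."""
    return total_bytes / gpus / 1e6


for name, width in BYTES_PER_PARAM.items():
    total = weight_bytes(PARAMS, width)
    print(f"{name}: {total / 1e12:.0f} TB of weights, "
          f"{per_gpu_megabytes(total, GPUS):.1f} MB per GPU if sharded evenly")
```

At 2 bytes per parameter the weights alone come to about 2 TB. Training needs considerably more than this, since gradients, optimizer state and activations are not counted here.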

Aside from Intel, HPE and Argonne, other collaborators include U.S. Department of Energy laboratories, universities around the world, nonprofits and international partners.
