Makes sense when you’re planning to buy Arm

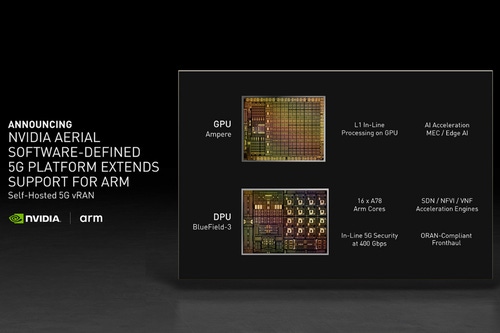

Nvidia has announced that its Aerial framework for GPU-Accelerated 5G Virtual Radio Access Networks (vRAN) now supports Arm-based CPUs.

The upcoming BlueField-3 A100 hardware – expected to ship in the first half of 2022 – will feature Arm cores, and offer compatibility with both x86 and Arm-based processors.

“We’re bringing together two worlds — AI of computing and 5G of telecommunications — to create a software-defined platform for AI on 5G,” said Ronnie Vasishta, Nvidia’s senior vice president of telecoms.

When two become one

Nvidia is, of course, set to acquire Arm for $40 billion – although it is still waiting for that deal to go through. The pair are already planning to establish an AI laboratory at the Arm headquarters in Cambridge, seen as part of the takeover strategy.

In early June, Nvidia teased that servers with Arm-based CPUs would be certified to run its AI Enterprise software in 2022.

With the latest move, the company enables original equipment manufacturers to ship Arm-based CPUs and Nvidia AI Enterprise software with Aerial 5G.

Such systems promise a simplified method to build and deploy self-hosted vRAN that converges AI and 5G capabilities, Nvidia said.

The AI-on-5G computing platform will incorporate 16 Arm Cortex-A78 processors into the Nvidia BlueField-3 A100.

“This would result in a self-contained, converged card that delivers enterprise edge AI applications over cloud-native 5G vRAN with improved performance per watt and faster time to deployment,” Nvidia said.

BlueField-3 is optimized for 5G connectivity and for multi-tenant, cloud-native environments – as well as hardware-accelerated networking and management services at the edge.

Upgrades to HGX and new supercomputer

Nvidia also announced an upgrade to its HGX hardware platform set to "turbocharge" high-performance computing.

The latest A100 80GB GPUs, NDR 400G InfiniBand networking, and Magnum IO GPUDirect Storage software have all been added.

The A100 GPUs with 80GB of HBM2e high-bandwidth memory increase memory bandwidth by 25 percent compared to the A100 40GB – allowing for more data and larger neural networks to be held in memory, minimizing internode communication and energy consumption.

The 2,048-port switch provided by the 400G InfiniBand improves scalability by as much as six times compared to previous generations, Nvidia said. It would allow systems to connect more than a million nodes with just three hops using a DragonFly+ network topology.

Magnum IO GPUDirect Storage enables direct memory access between GPU memory and storage. This direct path would let applications benefit from lower I/O latency and use the full bandwidth of the network adapters while reducing the load on the CPU, Nvidia said.

Dell Technologies, Hewlett Packard Enterprise, Lenovo, and Microsoft Azure are among the firms already using the HGX platform. Next, the same hardware is set to power Tursa, the upcoming supercomputer housed by the University of Edinburgh.

Tursa is the third of four DiRAC (Distributed Research utilising Advanced Computing) next-generation supercomputers to use the HGX platform, with the final machine set to feature Nvidia’s InfiniBand networking.

“Tursa is designed to tackle unique research challenges to unlock new possibilities for scientific modeling and simulation,” said Luigi Del Debbio, professor of theoretical physics at the University of Edinburgh and project lead for the DiRAC-3 deployment.

The new supercomputer, built with Atos, is expected to go into operation later this year. It will feature 448 Nvidia A100 Tensor Core GPUs and will include four Nvidia HDR 200Gb/s InfiniBand networking adapters per node.

The system will be run by DiRAC — the UK’s integrated supercomputing facility for theoretical modeling, which has existing deployments at the University of Cambridge, Durham University, and the University of Leicester.
