AI tech is helping advance nuclear fusion – and is being pitched as the answer to the problems facing ITER, the world's largest magnetic confinement plasma physics experiment

July 3, 2020

AI tech is being pitched as the answer to the problems facing the world's largest magnetic confinement plasma physics experiment

For fifty years now, nuclear fusion has been held up as a potential source of cheap, environmentally friendly energy. The process takes place in the plasma of the sun, where, in an ocean of high-pressure ionized gas, light elements combine into heavier ones and release the energy which fuels our solar system.

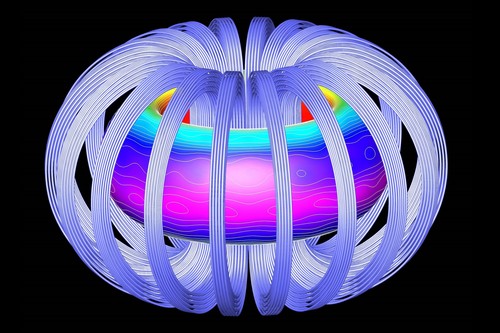

It’s not easy to replicate this on Earth. By the 1950s, plasma physicists had settled on the tokamak, a doughnut-shaped ring of plasma contained in a magnetic field, but so far they have not managed to contain a plasma long enough, or at a high enough temperature, to generate useful energy.

For more than 50 years, fusion has been the energy of the future, and scientists joke that “it always will be!”

But there are signs that things may be speeding up – and if commercial fusion ever takes off, AI will play a crucial role. Machine learning is helping accelerate the research and, if we ever have fusion power plants, every single one of them will be controlled by embedded AI systems.

Making doughnuts

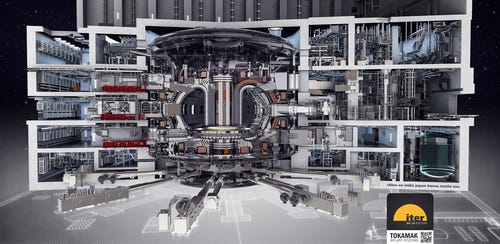

Government-backed fusion research is moving towards a grand goal, a colossal project called ITER, due to go live in the south of France in a few years. Meanwhile, startups believe they have an alternative route to get there quicker: both are using AI.

Fusion researchers are focused on Q, the ratio of energy output to energy input. A Q of 1.0 means breaking even, but a useful generator has to go way beyond that. So far, the record for a fusion reaction is a Q of 0.67, achieved in the 1990s by JET, the Joint European Torus in the UK.
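As a rough illustration of the arithmetic behind Q, the sketch below divides fusion power produced by heating power injected. The function name is made up for this example, and the 16 MW and 24 MW figures are the commonly cited numbers for JET's record shot rather than values given in this article.

```python
def fusion_gain(p_fusion_mw: float, p_heating_mw: float) -> float:
    """Return Q: fusion power produced divided by heating power injected."""
    if p_heating_mw <= 0:
        raise ValueError("heating power must be positive")
    return p_fusion_mw / p_heating_mw


# Assumed figures for JET's record shot: about 16 MW out for about 24 MW in.
q_jet = fusion_gain(16.0, 24.0)
print(f"JET Q = {q_jet:.2f}")  # prints "JET Q = 0.67"

# Break-even means the plasma gives back exactly what was put in.
q_break_even = fusion_gain(24.0, 24.0)
print(f"Break-even Q = {q_break_even:.1f}")  # prints "Break-even Q = 1.0"
```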

JET is the precursor to ITER (the International Thermonuclear Experimental Reactor) – a mega-project backed by the EU, the US, Russia, China, India, Japan and South Korea, which promises to deliver a Q beyond 1.0, at a cost of more than $25 billion.

“Right now we are right there, at the break even point,” William Tang, principal research physicist at the Princeton Plasma Physics Laboratory, told AI Business. “To make progress, you need a larger device. You had to move from pulse tokamaks to long-pulse systems built using superconducting materials.”

Even if ITER gets Q to 1.0, it will only be the start of the path to a self-sustaining, controlled fusion reaction, Tang said: “A good analogy is the Wright brothers. At Kitty Hawk [where they flew a heavier-than-air craft in 1903], they would have thought you were crazy to think this would be competitive with other means of transportation.”

The problem is that plasmas fluctuate. Their behavior is a complex mix of fluid dynamics and electromagnetism, which is hard to predict and control. “Disruptions occur very quickly, with a big release of energy which will damage the confining vessel, and keep you from achieving your goal,” Tang said.

A few years back, Professor Tang saw the possibility that AI could generate predictions of disruptions – and that, powered by fast enough supercomputers, it could do so quickly enough to eventually control a tokamak.

He gathered young specialists, and in 2019 his team had a letter published in Nature. “Predicting disruptive instabilities in controlled fusion plasmas through deep learning” emerged from the PhD work of Julian Kates-Harbeck, with help from Tang and Alexey Svyatkovskiy.

The paper does what the name suggests. It explains how a neural network was taught to spot the signs of an impending disruption, in time to head it off. “Advances in AI methodologies are testable and have given us a lot of hope,” Tang said. “We were very fortunate – we made these advances and validated them against a lot of observations.”

There is a huge amount of experimental data: test runs or “shots” recorded at JET and other tokamaks such as General Atomics’ DIII-D, which has been running in San Diego since the 1980s. Kates-Harbeck and the team used some of these shots to train a recurrent convolutional neural network which takes a large number of inputs.
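To give a feel for what “recurrent convolutional” means here, the following is a minimal, untrained NumPy sketch and not the team's actual model. A small convolution summarizes a spatial profile at each time step, and a recurrent state carries the history of the shot forward. Every size, weight and signal below is a made-up assumption, and a plain tanh recurrent cell stands in for whatever recurrent unit the real network uses.

```python
import numpy as np

rng = np.random.default_rng(0)

N_SCALARS = 4    # hypothetical scalar diagnostics per time step
N_PROFILE = 16   # hypothetical points in one spatial profile per time step
KERNEL = 3       # width of each 1D convolution filter
N_FILTERS = 2
HIDDEN = 8

# Randomly initialised weights: this sketch is untrained.
w_conv = rng.normal(scale=0.5, size=(N_FILTERS, KERNEL))
w_in = rng.normal(scale=0.5, size=(HIDDEN, N_SCALARS + N_FILTERS))
w_rec = rng.normal(scale=0.5, size=(HIDDEN, HIDDEN))
w_out = rng.normal(scale=0.5, size=HIDDEN)


def profile_features(profile):
    """Slide each filter along the profile, apply ReLU, max-pool to one value per filter."""
    n_windows = len(profile) - KERNEL + 1
    windows = np.stack([profile[i:i + KERNEL] for i in range(n_windows)])
    activations = np.maximum(windows @ w_conv.T, 0.0)
    return activations.max(axis=0)


def disruption_scores(scalars, profiles):
    """Return one score in (0, 1) per time step for a single shot."""
    hidden = np.zeros(HIDDEN)
    scores = []
    for s_t, p_t in zip(scalars, profiles):
        x_t = np.concatenate([s_t, profile_features(p_t)])
        # The recurrent state mixes the new inputs with everything seen so far.
        hidden = np.tanh(w_in @ x_t + w_rec @ hidden)
        scores.append(1.0 / (1.0 + np.exp(-(w_out @ hidden))))
    return np.array(scores)


# A fake 50-step shot of random numbers, standing in for real diagnostics.
shot_scalars = rng.normal(size=(50, N_SCALARS))
shot_profiles = rng.normal(size=(50, N_PROFILE))
scores = disruption_scores(shot_scalars, shot_profiles)
print(scores.shape)  # one score per time step
```

Because the weights are random, the scores mean nothing. The point is only the data flow: convolution across space at each instant, recurrence across time.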

This architecture is highly parallel, and speeds up well on GPU-based supercomputers. Tang’s connections with the high performance computing (HPC) community got the team time on machines at the Oak Ridge National Laboratory (whose 200-petaFLOPs Summit only just lost the title of the world’s fastest supercomputer in June 2020). In an aside, he told us he’s hoping for some time on Aurora – an exaflop supercomputer due to launch next year.

Trained on a set of shots from JET, the Fusion Recurrent Neural Network (FRNN) was applied to other shots, and could predict impending disruptions with 90 percent accuracy, on timescales of milliseconds. On a live system, that would be quick enough to take preventive measures and save the shot. This was completely beyond the ability of systems trying to calculate disruptions from raw sensor readings.
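One plausible way to score a predictor like this is to count an alarm as useful only if it fires early enough before the disruption, and to count any alarm on a safe shot as a false alarm. The sketch below does that on four invented shots. The `Shot` class, the threshold and the two-millisecond warning window are illustrative assumptions, not the evaluation used in the paper.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Shot:
    scores: List[float]           # one predictor output per millisecond
    disruption_ms: Optional[int]  # time of disruption, or None for a safe shot


def first_alarm(scores: List[float], threshold: float) -> Optional[int]:
    """Return the first time step whose score reaches the threshold, if any."""
    for t, score in enumerate(scores):
        if score >= threshold:
            return t
    return None


def evaluate(shots: List[Shot], threshold: float, min_warning_ms: int) -> Tuple[float, float]:
    """Return (true positive rate, false positive rate); needs one shot of each kind."""
    caught = false_alarms = n_disruptive = n_safe = 0
    for shot in shots:
        alarm = first_alarm(shot.scores, threshold)
        if shot.disruption_ms is None:
            n_safe += 1
            if alarm is not None:
                false_alarms += 1
        else:
            n_disruptive += 1
            # An alarm only counts if it leaves enough time to react.
            if alarm is not None and shot.disruption_ms - alarm >= min_warning_ms:
                caught += 1
    return caught / n_disruptive, false_alarms / n_safe


toy_shots = [
    Shot([0.1, 0.2, 0.8, 0.9, 0.9], disruption_ms=5),  # alarm at 2 ms, 3 ms warning
    Shot([0.1, 0.1, 0.2, 0.9], disruption_ms=4),       # alarm at 3 ms, too late
    Shot([0.1, 0.2, 0.1, 0.3], disruption_ms=None),    # safe, no alarm
    Shot([0.1, 0.7, 0.2, 0.1], disruption_ms=None),    # safe, false alarm
]
tpr, fpr = evaluate(toy_shots, threshold=0.6, min_warning_ms=2)
print(tpr, fpr)  # prints "0.5 0.5"
```

Raising the threshold trades missed disruptions for fewer false alarms, which is why a single accuracy figure only tells part of the story.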

Self-driving tokamak

The team is taking steps towards actually using FRNN to control a live plasma: “We’ve been able to get this piece of software to speak the language of the older [DIII-D] control system and exercise predictions in a millisecond timescale. There are many, many actuators in the tokamak, controlling temperature, heat and other things. If you can make predictions quickly enough for the actuators to be engaged, it is very exciting,” Tang said.

FRNN made another big advance: it learned transferable knowledge. Trained on data from DIII-D, FRNN could predict disruptions in shots that ran later on JET – a completely different torus. And it could use learning from low-energy JET shots to predict issues with later shots, which took JET closer to fusion.

By the time ITER is switched on, it could have a system on board which has a good idea of how to keep the plasma stable, so the ITER scientists will be able to get up to actual fusion experiments more quickly. Tang is working on a program of experiments and demonstrations to get FRNN or its descendants into the ITER system.

“Demonstrating it on a real live tokamak [the DIII-D system] in real time – that will persuade them that adopting this type of capability is in their best interests,” he said. “Of course, we could fall flat on our faces – but this could be a tool to get ITER up and running sooner than it might.”

A drawing of the ITER tokamak and integrated plant systems now under construction in France. © oakridgelabnews

Beyond ITER, future fusion experiments and eventual reactors will need systems like FRNN for autonomous control to keep them stable without the need for human intervention: the “self-driving tokamak” will be essential for safety and stability.

Developing the system further should be relatively simple. When he asks for supercomputing resources, the professor is pushing at an open door, because fusion research is popular and exciting: “We have good exemplar algorithms, which demonstrate the power of these systems in viable applications. That’s a very nice benchmark, and FRNN is very much desired. We are on the top supercomputers in Japan and the US right now.”

There’s one potential problem: the supply of human brains. Kates-Harbeck and Svyatkovskiy, the AI experts on the FRNN paper, have moved on to startups, like many of the world’s best minds. But Tang says they’re still in touch: “They’ve stayed engaged with us and they’re happy about the progress of their baby.”

And as for the future, nuclear fusion is attractive enough to get many more youngsters involved: “There are other super-bright post-docs coming in. This is just a lot of stimulation and fun!”
