Beyond Robo-writers: How AI is Shaping the Future of News

News in the age of generative AI: The promise and perils of automated journalism

At a Glance

- Can AI be an 'alpine walking stick' for news? Augmenting, not replacing journalists?

Part one of a series on AI in journalism

Automated journalism has been around for the better part of a decade – but was limited to larger corporate newsrooms like The Associated Press and Bloomberg.

Mario Haim & Andreas Graefe conducted studies in 2017 that found that the quality of automated news is “competitive with that of human journalists for routine tasks.”

The advent of tools like ChatGPT has made augmented news production more accessible to newsrooms all over the globe.

“Anyone can use it, if you’re the intern or the CEO, anyone can put a prompt in,” said Charlie Beckett, professor of practice at the London School of Economics’ department of media and communications.

Beckett, who leads the Polis JournalismAI project, likened the user experience (UX) of a tool like ChatGPT to when Google first launched search, calling it “a master stroke.”

“We’re all going to be using this in the same way that we all use smartphones, search, and social media. This is going to become part of the fabric of our lives.”

An 'alpine walking stick' for news: AI as an augmenting aid

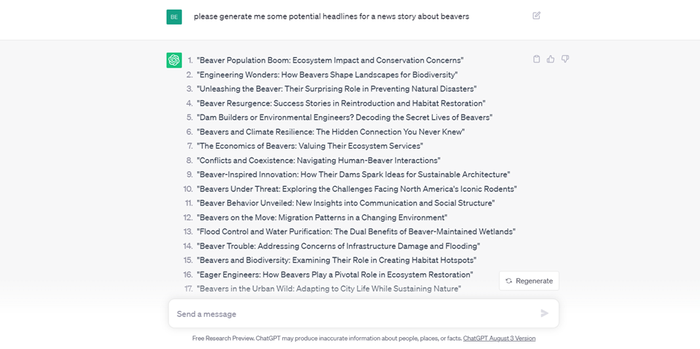

There are several ways for journalists to apply AI. Take headline generation: Simply type what you want into a text box, like ‘please generate me some potential headlines for a news story about beavers,’ and you’ll receive what you asked for.

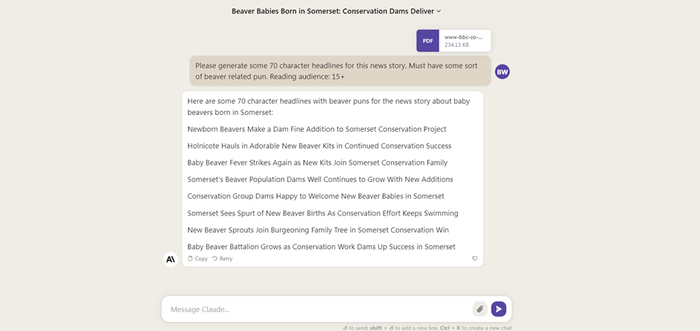

Using a more refined prompt - or a feature like Custom Instructions - chatbot users can refine AI-generated outputs to fit a certain newsroom’s style. Claude from Anthropic, while in beta, allows users to upload lengthy documents, which could be used to evaluate news content.

Instead of merely asking for a headline on beavers, for example, journalists could use the above to finally craft the perfect headline.
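As a rough illustration of what a more refined prompt might look like, the short Python sketch below assembles one from a topic and a list of style rules. The function name `build_headline_prompt` and the example rules are assumptions made up for this illustration, not any newsroom's actual guidelines or a feature of any chatbot. The resulting text would simply be pasted into a tool like ChatGPT.

```python
def build_headline_prompt(topic: str, style_rules: list[str], count: int = 5) -> str:
    """Assemble a headline prompt from a topic and optional house-style rules."""
    if count < 1:
        raise ValueError("count must be at least 1")
    lines = [f"Generate {count} potential headlines for a news story about {topic}."]
    if style_rules:
        # Spell out the newsroom's style as explicit bullet-point instructions.
        lines.append("Follow these house-style rules:")
        lines.extend(f"- {rule}" for rule in style_rules)
    return "\n".join(lines)


# Hypothetical style rules, for illustration only.
HOUSE_STYLE = ["Use sentence case", "Stay under 70 characters", "Avoid puns"]

prompt = build_headline_prompt("beavers", HOUSE_STYLE, count=3)
print(prompt)
```

Keeping the style rules in one shared list means every journalist in a newsroom sends the chatbot the same instructions, rather than retyping them from memory.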

A use case like headline generation shows that what was once a complicated concept is now far simpler.

The LSE professor said AI’s application in journalism spans “every little bit” of the news production process - editing video, building web products and infilling spaces in design.

Beckett described the uses of AI in journalism as “mundane, supplementary, but creative,” adding: “AI can support any kind of process where there's data involved, and you're trying to manipulate data or language.”

“The journalism industry is so siloed - the graphics department, the archive department, the IT department, the video department, the audio department, the newsgathering, the news distribution. This technology allows you to cross these boundaries and suddenly job demarcation could be eroded by this.”

Journalists are making use of AI tools like Grammarly to check their work for mistakes and Otter.ai to transcribe audio for them. These are simple uses, but they are ways in which content can be created not only faster, but smarter.

Channeling Turing Award winner Yann LeCun and his comments that AI is more akin to a typing aid, Beckett said: “If [AI] is something that can make you type more intelligently, it’s a bit like alpine walking sticks in that if it makes you go 10% faster, then that’s amazing.”

Automation vs. augmentation: Cautionary tales of automated content

While it could be used to improve ideas for headlines or generate potential interview talking points, one thing AI cannot do at present is generate opinionated news.

Beckett pointed to the case of the Limerick Leader, a local news outlet in Ireland that used ChatGPT to generate an opinion piece about immigration.

Under the headline ‘Should refugees in Ireland go home?’, the OpenAI chatbot was used to generate an opinion-based article on a sensitive subject. The resulting article was later reworked and labeled as an ‘experiment’ - with the headline now reading: ‘Can we trust Artificial Intelligence?’ (The original version is still visible via the Wayback Machine.)

The article caused an uproar, with the National Union of Journalists (NUJ) suggesting it was of “grave concern.”

Séamus Dooley, the NUJ Irish Secretary, said: “While the article seems relatively benign, the question is loaded and is a classic trope. A journalist writing such a story would examine the local and national context, talk to relevant agencies and NGOs and perhaps discuss personal stories. The article largely ignores the human dimension, the pain and suffering of those forced to flee persecution or human rights abuses, the complex reasons why people seek asylum and the reasons why refugees may not be in a position to ‘go home.’”

Beckett said the article represents the "worst possible use case" for AI in journalism and shows how a news outlet can harm its reputation by using AI.

A similar instance befell CNET, the technology news outlet, which was caught using AI to write stories. News outlets like CNET and the Limerick Leader using AI to produce news and articles at scale is a use case Beckett could not get behind.

“It's not about automating content all the time. Because who wants automated content?” he said. “I do if it's simple stuff, but I want the good stuff. Doesn't matter if you're writing for The Sun, or The Sunday Times, people want stuff that's got an extra added human value. It's funny, it's exciting. It's investigative, it's moral. It's got judgment. It's got personality. Those are the things that stand out for people. And the AI is not very good at that.”

AI does have its uses in the newsroom, such as changing headlines or making pieces of content work for a more regionalized audience, as smaller, local newsrooms are few and far between.

An exchange of value: How journalists can shape AI development

Beckett cautions that it’s early days for AI in journalism, with what he described as a ‘Robo-journalist’ suite of tools potentially on the horizon. Google is reportedly building a tool with journalists in mind, dubbed Genesis, which takes in facts and generates related news copy in return. Little else is known about Genesis at present.

One team working on something similar is AppliedXL - a real-time information company co-founded by Francesco Marconi, former R&D chief at The Wall Street Journal and author of the seminal work on AI in journalism: Newsmakers: Artificial Intelligence and the Future of Journalism.

Marconi’s team recently unveiled the first language model rooted in journalism - AXL-1, an experimental language model that turns structured data into concise news digests, which can be tailored to a specific industry.

Marconi told AI Business that language models can be used to turn industry trends into succinct news summaries, but added that for AI systems, the ability to "capture human subtleties, diverse opinions, and current affairs is crucial. AI has the potential to process a much larger amount of information and from a much wider range of sources, and it can do so in an extremely efficient and comprehensive manner.

“AI systems can sift through millions of data points and documents to detect patterns, quantify perspectives, and validate occurrences. This can help determine whether a reported event or viewpoint is truly a statistical outlier or more commonplace.”

Marconi published his Newsmakers book in 2020 - back when there was no ChatGPT. OpenAI’s GPT-3 only came out in June of that year. But the former R&D chief noted the principles of his work - transparency, accuracy and reliability - are upheld when integrating AI into journalism.

Marconi said newsrooms turning to tools like ChatGPT should consistently integrate journalistic perspectives, including by embedding human checkpoints to assess and counteract potential biases.

There is, of course, an inherent tension between news organizations and AI developers - with news media often viewing tech platforms as “disruptors.”

Marconi expressed that while tensions are expected for an industry proud of its ways of working, it's essential to understand the potential benefits for both parties from genuine collaboration.

AI firms can offer technical infrastructure and know-how in return for data from news agencies along with their human insights and concepts of ethics to help guide such systems.

“A decline in investment in quality journalism equates to a reduced quality of data for AI systems,” he said. “This decline impacts not just the media sector but society at large.”

Automation with intention: Defining responsible AI-human collaboration

Marconi believes that as AI expands into the newsroom, it's incumbent upon humans to act as "stewards, ensuring that AI remains ethical, transparent and accurate."

And Beckett argues that conversations about robots taking over distract from other important issues around AI - like copyright, data privacy and competition. "The real debate is granular, gritty and difficult," he said.

Beckett’s ‘alpine walking stick’ analogy is apt in the sense that if it helps improve things - then why not use it? But he stressed the need to take time to understand potential implementations.

As Beckett states, news organizations should adopt AI responsibly, not rushing ahead before ensuring it provides value.

AI in the newsroom is inevitable. It was already here before ChatGPT, but now it’s entering a new era where smaller newsrooms can use it. Only through responsible development and transparent integration can media flourish from AI augmentation.
