Leaked: New Web Development Tools from Google

The highly anticipated GPT-4 rival, Google's Gemini, will power multimodal features in its developer platform

Google is adding tools to its web development platform that will let users build apps from natural language prompts. Users will also get access to multimodal capabilities.

In a post on Medium, JavaScript engineer Bedros Pamboukian claims to have obtained screenshots of new AI features coming to MakerSuite. Among them is Gemini, Google's highly anticipated and as-yet-unreleased multimodal AI model, which will enable text, image and audio inputs and outputs on the platform.

The features have not been publicly announced, and the screenshots, if genuine, show early builds: several aspects of the user interface appear incomplete.

AI Business has contacted Google for comment.

What’s been leaked?

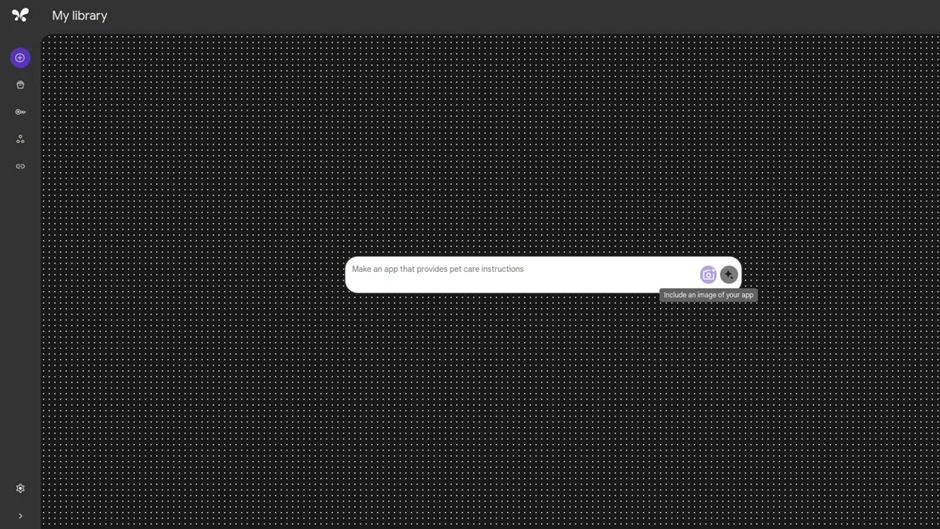

A handful of reported features were shown off. Chief among them was Stubbs, a way to easily create and share AI-generated app prototypes with minimal effort. If real, Stubbs looks to be a less complex way of developing web apps, suitable for non-technical users.

The Stubbs workspace on Makersuite

There’s also Stubbs Gallery, which would allow MakerSuite users to view other users' Stubbs and rework them for their own needs. According to the leak, Stubbs will not be public by default; users will have the option to make them viewable to others.

Gemini, Google's next-generation large language model, is also listed under the codename ‘Jetway’ for MakerSuite integrations. It will reportedly power multimodal capabilities, including text recognition, object recognition, captioning and image understanding – meaning users can use images, videos and even HTML in prompts. The author said Gemini will also be integrated into Vertex AI, Google Cloud’s machine learning platform.

Also on its way are an autosave feature for MakerSuite, translation support for non-English prompts and a Google Drive integration to allow users to easily import images and files directly into the editor.

Google Gemini: What we know so far

Google has been teasing Gemini since it was announced at the company’s I/O event in May. There, Google CEO Sundar Pichai said that in early tests of the model the company witnessed “impressive multimodal capabilities not seen in prior models.”

Gemini has been highly anticipated in the AI community, billed as a true rival to OpenAI’s ChatGPT. Google DeepMind, the unit formed by merging the Brain team and DeepMind, is working on the model.

Details have been sparse. We know for certain that Gemini is multimodal, meaning it can handle inputs/outputs for text, videos and images. It can also tap into other tools and APIs.

There is no definitive timeline for when Gemini might be released. A small number of companies have been given access, with The Information reporting that Gemini will be made available to companies of varying sizes via Vertex AI and its developer environment platforms.

Easing access to app creation

There is growing interest in using AI to improve web app development, specifically to make it more accessible.

Google is working on Project IDX, a new development environment that gives users AI tools to try out. There’s also MetaGPT, which allows users to build apps using natural language, and other tools like GitHub Copilot and Replit AI.

And just this week, a former Google engineer proposed a novel way to build and run AI-powered web apps locally on-device, rather than in the cloud.

If the presumed addition of Stubbs holds true, it could “open the floodgates for the consumer, prosumer and even ISV marketplace with far more creators looking to Google’s low- or no-code AI tooling than ever before,” according to Bradley Shimmin, chief analyst for AI and data analytics at sister research firm Omdia.

The realities of leaks in tech

Nothing in the blog post has been verified by Google. As for the origin of the screenshots, Pamboukian said the images “are not from any external source.”

He does not mention how he got access to these unreleased features – AI Business has reached out to Google to confirm.

The reality is that developers can unearth as-yet-unreleased features just by digging. In June, a developer leaked that Instagram was working on AI chatbots – a full three months before Meta CEO Mark Zuckerberg unveiled chatbots with personalities for the company’s apps at Connect 2023.
